Google is sneaking a massive 4.7GB AI model into Chrome, and Mozilla is fighting back as the future of browsers threatens to turn into an AI arms race. Find out what's really happening behind this push and why it's setting off alarm bells across the web.

- Hackers AI-code a portal, forget to add authentication.

- The UK's NCSC issues a Mythos warning. Where's CISA?

- Another (of many) Linux local privilege escalations.

- AI may be spelling the end of bug bounties.

- Anthropic releases "Claude Security" mini-Mythos.

- ChatGPT gets very serious about login security.

- Syncthing's SyncTrayzor v1 abandoned; v2 created.

- Google drops an AI API into Chrome; Mozilla objects.

Show Notes - https://www.grc.com/sn/SN-1077-Notes.pdf

Hosts: Steve Gibson and Leo Laporte

Download or subscribe to Security Now at https://twit.tv/shows/security-now.

You can submit a question to Security Now at the GRC Feedback Page.

For 16kbps versions, transcripts, and notes (including fixes), visit Steve's site: grc.com, also the home of the best disk maintenance and recovery utility ever written, SpinRite 6.

Join Club TWiT for Ad-Free Podcasts!

Support what you love and get ad-free audio and video feeds, a members-only Discord, and exclusive content. Join today: https://twit.tv/clubtwit


[00:00:00] It's time for Security Now. Steve Gibson is here. He is armed with the knowledge that Google is now downloading 4.7 gigabytes when you download Chrome. What is it? A local AI model. Steve talks about its implications next on Security Now. This episode is brought to you by OutSystems, a leading AI development platform for the enterprise. Organizations all over the world are creating custom apps and AI agents on the OutSystems platform. And with good reason.

[00:00:28] Build, run and govern apps and agents on one unified platform. Innovate at the speed of AI without compromising quality or control. Trusted by thousands of enterprises worldwide for mission critical apps. Teams of any size and technical depth can use OutSystems to build, deploy and manage AI apps and agents quickly and effectively without compromising reliability and security.

[00:00:52] With OutSystems, you can accelerate ideas from concept to completion. It's the leading AI development platform that's unified, agile and enterprise-proven, allowing you to build your agentic future with AI solutions deeply integrated into your architecture. OutSystems. Build your agentic future. Learn more at OutSystems.com slash TWIT. That's OutSystems.com slash TWIT.

[00:01:42] But that doesn't mean I'm not going to get Steve Gibson on the horn and talk about security, because I know you need your fix. Hello, Mr. G. You know, Leo, you look a little more tan than you did last time we saw you. I am. See, my hand is light, but my face is a little dark. Do mai tais increase skin pigmentation?

[00:02:09] Maybe that's what it is. Maybe that's what it is. Something. Yeah, we're on vacation, but, you know, I still want to do the shows, and so I've set up, if you could only see this kooky setup. I am outside on the lanai. We hear the exotic birds tweeting in the back. There are some exotic birds. There's myna birds, there's house sparrows, and there's a bird that looks like a little chicken

[00:02:35] called a falcon, I can't remember the name of it, but, chicken bird, and it's very noisy, so you'll know if it decides. But the sparrows are very aggressive. They might think I have something to give them, so they might be coming up here and sitting on my shoulder. Well, it all adds to the ambiance that we have with you and Lisa on the Big Island. It's so beautiful. Have you been

[00:02:58] to Hawaii, Steve? Oh, yeah. I had the second half of my honeymoon in Hawaii. I love Hawaii. I do. So what's coming up on Security Now this week? So, episode 1077. It's funny, every so often I think about 1077, which sort of puts the infamous 999 into context. Yeah. Now it's been a while. Yeah.

[00:03:22] Actually, it's been more than a year. Seven, seven, seven miles, yeah. So there were two main topics which were contesting to win the coveted title of the podcast this week. Google's arguably premature move to build AI into Chrome ended up winning, because Mozilla has said,

[00:03:52] not so fast. Yeah. But we've got a lot of good things to talk about. It turns out that some hackers used AI to code up a portal for stolen credit card verification. They forgot to ask it to add authentication, so, whoops. Also, the UK's security group, the NCSC,

[00:04:21] has issued their own Mythos warning, which caused me to wonder, where is CISA? Why haven't you heard anything from CISA? We're going to touch on that. We've got another of many recent Linux local privilege escalations. This one is bad, and it's affected Linux for years. And yes, AI found it.

[00:04:44] Also, some interesting commentary about the ground shifting under AI and vulnerability research, how it's looking like it may spell the end of bug bounties, and why that is probably the right thing to have happen. Also, Anthropic has released what they call Claude Security as a mini-Mythos.

[00:05:11] ChatGPT has made some changes which demonstrate it's getting very serious about login security. I want to make a comment about something I discovered since we last talked about the end of life of SyncTrayzor version 1, which is what I use to sort of bundle Syncthing into a nice little applet for Windows. But there's a replacement for it. And then we're going to talk

[00:05:41] about how Google has sort of surprised everyone by just saying, we think it's time that we add AI support in JavaScript. So lots of fun things to talk about, and of course a great picture of the week. So, yeah. Oh, and there are a couple things that happened just now, just so that our listeners know that I'm

[00:06:04] aware of the fact that DigiCert suffered a major breach. Oh. Which allowed 30 EV code signing certificates to get minted behind their back. Oh, that's not good. And used. However, their disclosure is being called a reference, a state of the art. This is the way you do it if you're going to

[00:06:29] say what happened, if you're going to share with the industry your post-mortem. So they just updated it 10 minutes ago, so it's still a little bit in flux. We'll take a look at what they had to say, things that went right, things that went wrong, and what they learned. It ended up being a social hack. A malicious screensaver, of all things, got into two of their tech support members'

[00:06:55] PCs, and it wasn't detected due to a CrowdStrike endpoint security misconfiguration. So anyway, I'm all up to speed on it, but I just didn't have time. Well, actually, we're still learning a lot and it's still in flux, so we'll have good coverage of that next week. I just want to let everybody know that I was aware of that. So we'll take our first break, we'll look at the picture of the week, and then we'll get into all this. It really just shows you how anybody

[00:07:23] is vulnerable to this. And as you said when we were at the ThreatLocker Zero Trust World, the threat's coming from inside the house. A network engineer who put a screensaver on his system, and suddenly you're compromised. That's terrible. All right. Well, I'm not going to do the commercials from here in Hawaii. I'm on vacation. So I'm going to let Leo in Petaluma take this one, and then we'll be back with the picture of the week. Right, white-skinned Leo? Yes. This episode of

[00:07:50] Security Now is brought to you by Zscaler, the world's largest cloud security platform. You know, the potential rewards of AI are too great to ignore. But so are the risks: loss of sensitive data and attacks against enterprise-managed AI. Generative AI increases opportunities for threat actors, helping them to rapidly create phishing lures, write malicious code, and automate data extraction. There were

[00:08:14] 1.3 million instances of Social Security numbers leaked to AI applications. It's time for a modern approach with Zscaler's Zero Trust plus AI. It removes your attack surface, secures your data everywhere, safeguards your use of public and private AI, and protects against ransomware and AI-powered phishing attacks. Don't believe me? Check out what Siva, the director of security and infrastructure at

[00:08:39] Zuora, says about using Zscaler. Watch. AI provides tremendous opportunities, but it also brings tremendous security concerns when it comes to data privacy and data security. The benefit of Zscaler with ZIA rolled out for us right now is giving us the insights of how our employees are using various gen AI tools. So, ability to monitor the activity, make sure that what we consider confidential and sensitive

[00:09:04] information according to, you know, the company's data classification does not get fed into the public LLM models, et cetera. Thank you, Siva. With Zero Trust plus AI, you can thrive in the AI era. You can stay ahead of the competition. You can remain resilient even as threats and risks evolve. Learn more at zscaler.com slash security. That's zscaler.com slash security. Now back to Steve and Security Now. Thank you, Leo.

[00:09:35] You know, just to explain behind the scenes, we weren't sure this was going to work at all, and so we thought, well, I'd better pre-record the commercials in case, I don't know, Micah had to jump in or something. And I brought a Starlink Mini and all sorts of backup stuff, and it turned out, wow, who knew, in Hawaii they've got cable modems, high-speed internet. I didn't need to bring anything. So it isn't a complete proof of concept of your ability to roam anywhere on the globe

[00:10:04] and use... Not yet, not yet. I probably should, though, you know, set it up. We have this nice lawn behind us. It's perfect space for the Starlink. So I probably should just set it up before I go home and make sure that I can do that. But, you know, it could double as a bird bath, couldn't it? Yes, it could. Or a serving tray. All right. And now this is going to be another interesting

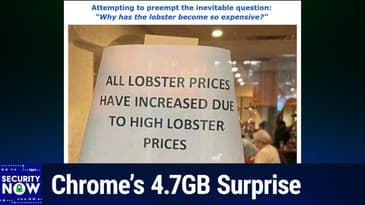

[00:10:29] experiment. I have the picture of the week. Shall we share it? So this was great, shared from a listener, of course, as are they all. I gave this one the caption "Attempting to preempt the inevitable question: Why has the lobster become so expensive?" Okay, so we see a sign. All right, I had seen the lobster part, but I hadn't seen the rest of it. That's hysterical.

[00:10:58] The sign is taped to the window of a buffet or a restaurant or something, explaining, just again, preempting the inevitable question. So the sign says, "All lobster prices have increased due to higher lobster prices." And I can see in the background there's, okay, a little old lady, an elderly

[00:11:22] person, and, you know, she went up to the guy at the restaurant and said, "Why are the lobster prices so high?" And he just pointed to the sign and said, "Lady." Yeah. Because they're so expensive. That's right. All lobster prices have increased due to high lobster prices. Very nice. Very nice. What are you going to do? Okay. So we begin this week with a story that intersects several security fronts. Last Wednesday,

[00:11:50] Cybernews' headline was "Scammers vibe-code server to verify stolen credit cards, leak details of 345,000 cards." I had to read that one twice to make sure I understood what they were saying. So here's what they discovered. They wrote: Threat actors, like so many programmers around the world, are no strangers to

[00:12:15] AI assisting in their operations. However, like so many vibe coders, scammers also run into security issues. On April 16th, the Cybernews research team discovered an exposed server owned by a threat actor. The exposed information is controlled by a carding market called Jerry's Store, as in Tom and Jerry. There were some little cartoons of a mouse jumping around on things, posted on the dark web.

[00:12:43] They said the tool provides credit card validity percentages for each seller. In other words, threat actors use this tool to check if the stolen payment card is still operational. According to our team, Jerry's Store operators extensively used Cursor, an AI-assisted development environment. That is one of the

[00:13:06] very earliest AI-based coding assistants, from several years back. Cursor, they said, to set up the leaking server, not knowing that it was leaking, and to create administrator-facing dashboards. Cursor, they wrote, is a legitimate service developed by the U.S. software company Anysphere. Researchers

[00:13:30] believed that relying on an AI assistant to set up the server was the reason it was exposed. Based on the chat logs our team was able to access, the threat actor received flawed instructions from their AI, imagine that, for building the dashboards. The team explained, quote, we were able to confirm the leak originated from the user

[00:13:54] asking to create a statistics dashboard, and Cursor created an unauthenticated open web directory to serve the web page, ignoring the need, because of course you didn't ask for it, ignoring the need to set up authentication or ensure that only the intended dashboard would be accessible. In other words, it's just like a regular user. If you don't ask for authentication, you're not going to get authentication.
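
A minimal sketch of the missing step described above, assuming a small Python dashboard served with the standard library. The credentials, the `/stats` path, and the names `is_authorized`, `DashboardHandler`, and `serve` are all hypothetical and chosen for illustration; nothing here reflects what Cursor generated or what the exposed server ran. It shows the two things the report says were skipped: requiring authentication, and serving only the intended page rather than an open directory.

```python
import base64
import hmac
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical credentials for illustration only; a real deployment would
# load these from a secret store, never from source code.
DASHBOARD_USER = "admin"
DASHBOARD_PASSWORD = "change-me"


def is_authorized(header_value, user=DASHBOARD_USER, password=DASHBOARD_PASSWORD):
    """Return True only if an HTTP Basic Authorization header matches."""
    if not header_value or not header_value.startswith("Basic "):
        return False
    try:
        decoded = base64.b64decode(header_value[6:], validate=True).decode("utf-8")
    except (ValueError, UnicodeDecodeError):
        return False
    supplied_user, sep, supplied_password = decoded.partition(":")
    if not sep:
        return False
    # compare_digest avoids leaking information through timing differences.
    user_ok = hmac.compare_digest(supplied_user.encode(), user.encode())
    password_ok = hmac.compare_digest(supplied_password.encode(), password.encode())
    return user_ok and password_ok


class DashboardHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Reject every request that does not carry valid credentials.
        if not is_authorized(self.headers.get("Authorization")):
            self.send_response(401)
            self.send_header("WWW-Authenticate", 'Basic realm="dashboard"')
            self.end_headers()
            return
        # Serve only the one intended page, never a directory listing.
        if self.path != "/stats":
            self.send_error(404)
            return
        body = b"<h1>Statistics</h1>"
        self.send_response(200)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(host="127.0.0.1", port=8080):
    """Start the dashboard bound to localhost (call explicitly to run)."""
    HTTPServer((host, port), DashboardHandler).serve_forever()
```

Basic authentication sends credentials in an easily decoded form, so a sketch like this only makes sense behind TLS. Binding to `127.0.0.1` by default is a deliberate choice: exposure to the internet then has to be asked for explicitly.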

[00:14:21] Anyway, they finished, saying: Moreover, the chat history reveals there was sufficient information for the Cursor large language model to identify that it was helping set up a credit card verification service, indicating a lack of sufficient guardrails to prevent abuse. And as you've often heard me say, I don't think you can really control a large language model. Researchers said, quote, it's a lesson

[00:14:49] for developers using Cursor for legitimate uses, showing how it can lead to accidental data leaks. Right. It's just going to write what you ask it to. It's not going to, like, be your security nanny. So Cybernews said that they'd reached out to Cursor for comment and would update their article with any additional information they receive. The fact that the Cursor AI produced a statistics dashboard driven by an unsecured and

[00:15:17] open web directory allowing unauthenticated remote access, I think it's a great example of the danger of using AI without being a domain expert, that is, you know, without knowing what to ask for. Because, you know, it'll give you what you ask for, but you need to know what that is. I've no doubt that the Cursor AI would have

[00:15:41] easily provided instructions for the authentication that was needed if it had been asked to, but apparently the bad guys never thought to ask. So somebody who wasn't really up to speed on web-based application security could easily fail to anticipate all the various ways others might access and

[00:16:08] penetrate their system. So, you know, expecting AI to produce secure solutions by default, it's probably a fool's errand. In this case, either it never occurred to them that authentication should be required where it was absent, or they didn't know it was going to be absent, or they assumed that the AI would know, you know, what it should do and would do it without bidding. The Cybernews article also

[00:16:38] provided some interesting background reporting on the underground industry in stolen credit cards, which I thought was interesting. They wrote: Operations such as Jerry's Store are integral to the cybercrime infrastructure. Once scammers obtain stolen credit card information, they need to verify which cards can still be exploited. Jerry's Store provides that service. Our team noticed that to complete

[00:17:05] the task, Jerry's Store operators use legitimate, well-known merchants. The Cybernews team explained, quote, threat actors used multiple legitimate merchant websites, such as Amazon US, Amazon Japan, Grubhub, Sam's Club, Temu, Lyft, e.l.f. Cosmetics, and CountryMax, utilizing hundreds or in some cases thousands of accounts that have

[00:17:33] already been established on these platforms to perform credit card validity checks. Attackers created those accounts to register stolen cards and then perform low-risk actions, as they call them. These could include adding cards as a payment method or making a very small purchase. If the platform accepts the card, threat actors mark the card as valid and sell it to other threat actors on the dark web. Using large merchants like Amazon

[00:18:02] or Grubhub, of course, is a way to mask their activity. Since large merchants process billions of payments, tiny transactions on a well-known website don't ring any alarm bells. They wrote: According to our team, the exposed server contained a treasure trove of credit card details. Details meaning, you know, everything you need to

[00:18:23] process someone's card. Researchers identified nearly 200,000 credit card details that the service had verified as, I'm sorry, 200,000 that were invalid, and over 145,000 that they had verified as valid. Now, the exposed information includes all the details that you need: credit card

[00:18:51] numbers, expiration dates, the security code, the cardholder's name, and their address. Typically, they wrote, valid credit card details are sold for between $7 and $18 each on the dark web, meaning that the value of the valid stolen card data, that's the 145,000 cards that have been verified, is somewhere between a million and 2.6 million dollars.
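
The "million to 2.6 million" range follows directly from the figures quoted above. A small worked check, using only those numbers (145,000 is a floor, since the report says "over 145,000"; the variable and function names are illustrative):

```python
# Figures as quoted from the Cybernews report.
VALID_CARDS = 145_000      # "over 145,000" verified-valid cards (a lower bound)
PRICE_LOW_USD = 7          # low end of the per-card dark web price
PRICE_HIGH_USD = 18        # high end of the per-card dark web price


def value_range(cards, low_price, high_price):
    """Return the (low, high) total value for a batch of cards."""
    return cards * low_price, cards * high_price


low_total, high_total = value_range(VALID_CARDS, PRICE_LOW_USD, PRICE_HIGH_USD)
print(f"${low_total:,} to ${high_total:,}")  # $1,015,000 to $2,610,000
```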

[00:19:18] They said: However, our team added that the actual value of the exposed infrastructure may be a lot higher, since Jerry's Store sells much more than just credit card data. That's just one of the types of, you know, fraud that they're making available in the store. They said: While it's unclear where Jerry's Store is located, internal tooling and leaked large language model chat logs suggest

[00:19:47] that the marketplace's administrator is fluent in Chinese. The server itself appears to be hosted in Germany by a suspected bulletproof hosting provider. The marketplace, which launched in late 2023, is a well-known credit card vetting tool within the cybercrime underground, aimed primarily at cards

[00:20:09] stolen from victims in the U.S. and the EU. Fluent in Chinese, but not AI, apparently. You know, this comes up a lot. We're going to see more of these. Whoops. Whoa. So hold on, camera, I'm over here. Thank you. Nice. People blame AI for stuff that they do that's dumb. There was a big story last week, and everybody blamed AI because the

[00:20:34] guy's production database got clobbered. But of course, if you give AI the keys to your production database, it's on you, my friend. And if you're dumb enough to say, hey, just make me a website to authenticate, but don't put any authentication layers in there, AI is going to do what you say. I think this comes from sort of a magical belief about AI, that it's somehow intelligent, or, you

[00:21:00] know, it's going to take care of you, and it's not. And probably it's a hope as much as a belief. Yes. It's like, I hope that AI knows how to do this, and since it seems to know a lot, I'm just going to assume that it does. Well, it does if you tell it to. It will. I mean, you're absolutely right. AI is great at OAuth. If you say, write the login page, use OAuth, make it secure, it will absolutely do that.

[00:21:27] But you have to tell it to. It's not going to assume that. It might, but again, it might not. The other thing I wanted to mention is, I have had credit cards stolen due to my own stupidity. And first of all, credit card companies know to look for those low-risk, low-value charges, you know. Right. In fact, they used to say, if somebody buys sneakers and then tries to fill up a tank of gas, they invalidate that credit card immediately, because that's the first thing somebody who steals a credit

[00:21:53] card is going to do. Times have changed. That's not true anymore. When my credit card was stolen, I mentioned this before, they added it to an Apple Wallet. So they had a previously set up Apple account and added it to an Apple Wallet, which they then used the credit card through, obscuring the source of the credit card, the actual credit card. Very, I thought, very clever.

[00:22:18] And I should have known, because when I gave it the six-digit code, it said, okay, we're trying to add this to that Apple Wallet. And I said, what are you talking about? I'm not trying to do an Apple Wallet. That should have been the hint to me that they were doing something funny. You know, it's a cat and mouse, Tom and Jerry kind of a game. Yeah. It does feel like, as I have said on the podcast

[00:22:40] before, I mean, in the early days, probably almost before this podcast, I used to fly up to Northern California to visit my family for the holidays. And this was, you know, pre-Expedia and so forth. So I actually had a travel agent from the old, old, old days who I just kept around. And when we would have our conversation, she would invariably say, well, so, Steve, do you have

[00:23:08] the same credit card, or have you lost that one too? Because I was out on the internet poking around. And it's like, oh, yeah. I don't feel so bad now. It did get good. Yeah, I did lose that one. So, okay. Last Friday, Ollie Whitehouse, the chief technology officer, you know, the CTO for the UK's NCSC, their

[00:23:35] National Cyber Security Centre, issued a clear warning at the level of the government. Ollie's posting was titled "Preparing for a vulnerability patch wave," and it carried the tagline "Organizations must act now to prepare for a wave of patches that will address decades of technical debt."

[00:24:01] And I love that term in this instance. I think that the term technical debt is exactly the right way to express the concept that, you know, the piper may be about to get paid. I have a friend from the Midwest whose favorite term for this would be, they're about to get their just comeuppance.

[00:24:23] Yes, comeuppance indeed. So here's what the UK's NCSC CTO wanted everyone within the United Kingdom, within his sphere of influence, to appreciate. He wrote: Whether they are technology producers and vendors or consumers and operators, all organizations have technical debt, a backlog of

[00:24:48] technical issues that's both expensive and time-consuming, as a result of prioritizing short-term gains over building resilient products. Artificial intelligence, when used by sufficiently skilled and knowledgeable individuals, is showing the ability to exploit this technical debt at scale

[00:25:10] and at pace across the technology ecosystem. As a result, the NCSC expect there will be a forced correction, which is the way he phrases it, we're going to have a forced correction, to address this technical debt across all types of software, including open source, commercial, proprietary, and software as a service. This is why we're encouraging all organizations to prepare now for when a

[00:25:39] patch wave arrives, a rush of software updates that will need to be applied across the technology stack to address the disclosure of new vulnerabilities. All organizations must take steps to identify and minimize their internet-facing and other externally exposed attack surfaces as soon as possible. As we've argued for some time,

[00:26:04] you should prioritize technologies on your perimeter and then work inwards, covering cloud instances and on-premises environments. By doing this, organizations can reduce the risk posed by latent vulnerabilities when they become known and exploited by attackers. Where organizations cannot apply updates across their entire environment, they should prioritize applying updates to their external attack surfaces.

[00:26:34] Where capacity extends beyond the external attack surface, organizations should prioritize critical security systems. It's also important for organizations to realize that patching alone will not always suffice. Some technical debt may be present in end-of-life or legacy technology that's out of support and so cannot receive updates. In such instances, organizations will need to replace technologies

[00:27:04] or bring them back within support, especially where it presents an external attack surface. Building on the principles contained within our vulnerability management guidance, organizations should make plans to deploy software security updates quickly, more frequently, and at scale, including across their supply chains. We are expecting an influx of updates to address vulnerabilities across all severities

[00:27:34] and expect a number to be critical. NCSC recommend that, and they have three: Where automatic secure hot patching is available, that is, patching that does not involve service disruption, this should be enabled as a priority. Okay, well, that's, you know, not hard to do, I imagine, as the first. Where automatic updates are available, including for embedded devices, this

[00:28:04] should be enabled to reduce the workload on support teams. So, yeah, turn on automatic updates and go for it. And third: Where neither of the above are available, organizations will need to ensure that processes and risk appetites support frequent and scaled updating, noting the operational trade-offs around disruption and safety-critical systems. A risk-prioritized approach, such as

[00:28:33] the Stakeholder-Specific Vulnerability Categorization system, can be used to prioritize installing updates. And then they continue: However, should a critical vulnerability be under active exploitation, especially when affecting an internet-facing system, then it is essential to accelerate the update process. Organizations can refer to the NCSC's new guidance on responding to active exploitation of vulnerabilities for more information.
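
The ordering the NCSC describes (accelerate anything under active exploitation, then the external attack surface, then critical security systems, then everything else) can be sketched as a simple sort key. This is one reading of the guidance, not the Stakeholder-Specific Vulnerability Categorization decision tree itself, and the `Asset` fields, tier numbers, and asset names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """A system awaiting updates; all fields are illustrative."""
    name: str
    internet_facing: bool = False
    actively_exploited: bool = False        # critical vuln under active exploitation
    critical_security_system: bool = False


def patch_priority(asset):
    """Return a tier for the asset; a lower number means patch sooner."""
    if asset.actively_exploited and asset.internet_facing:
        return 0   # active exploitation on the perimeter: accelerate
    if asset.actively_exploited:
        return 1   # active exploitation elsewhere
    if asset.internet_facing:
        return 2   # external attack surface first
    if asset.critical_security_system:
        return 3   # then critical security systems
    return 4       # then work inwards through everything else


def patch_order(assets):
    """Sort assets by tier; sorted() is stable, so ties keep input order."""
    return sorted(assets, key=patch_priority)
```

For example, given an intranet wiki, a SIEM flagged as a critical security system, an internet-facing VPN gateway, and an internet-facing firewall with an actively exploited flaw, `patch_order` returns the firewall first, then the gateway, the SIEM, and finally the wiki.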

[00:29:03] To summarize, you should put in place a policy to update by default, where you always apply software updates as soon as possible, and ideally automatically. This should be at the core of your update management process, but we recognize it may not apply in some instances, such as for safety-critical systems or operational technology. Patching alone won't address the systemic problems that my,

[00:29:32] he writes, my previous blogs have addressed. I've appealed to technology producers and vendors to ensure systemic technical security debt is minimized by including, where appropriate, memory safety and containment technologies. Similarly, for consumers and operators, a focus on cyber security fundamentals to raise resilience and to reduce the impact of breaches should be a priority.

[00:30:01] This includes adopting and fully implementing Cyber Essentials, or the Cyber Assessment Framework for organizations operating essential services such as energy, health care, transport, digital infrastructure, and government. Finally, prepare for the patch wave now. In conclusion, the NCSC advise all organizations, irrespective of size, to plan and prepare for the vulnerability patch wave.

[00:30:31] A good start is to plan for the NCSC's updated vulnerability management guidance. For larger organizations, we also recommend working to gain assurance from your supply chains, both commercial and open source, so that they're prepared to navigate any required response. One thing that occurred to me as I was going through this is that in the name of preparedness, and this certainly applies to everybody

[00:31:02] in the UK and out, this notion of gaining assurance from your supply chains, I would say make sure that the providers of the equipment that you have on the edge, which are under support, which can obtain updates, make sure they've got a greased path through into your email. Make sure that when they do notify you of

[00:31:32] updates that are available, it doesn't get routed to some, we'll get around to it, you know, next month during our monthly review process. I would say, you know, given what we expect to have happening here over the next couple months, make sure that the communications inbound from the vendors that you are depending upon to have the most recent code running can get to you. And

[00:32:02] largely, what I just shared from the NCSC, you know, it's a restating of what we already know, right? At the same time, for many of the CIOs and CSOs and IT heads in organizations throughout the UK, where this has reign, a clear statement and posting such as this can provide the cover and the backup they may need to succeed in getting their organizations, you know, and the other C-suite

[00:32:32] executives to take this seriously, to understand what is probably going to be happening shortly. And as I was seeing this note from the UK's NCSC, I realized that I hadn't seen anything from our own CISA in the U.S. And that struck me as odd, since the CISA we've all come to know would normally have been shouting about this from the mountaintops.

[00:33:00] so I went digging to see whether maybe I had missed that statement which you know it seemed clear CISA should have made in the wake of the mythos revelations

[00:33:12] I found a report published two weeks ago, on April 21st, by Axios, and it exactly addresses the question, where's CISA? The reporting was posted as a scoop, titled "Scoop: CISA lacks access to Anthropic's Mythos."

[00:33:33] And Axios explained, writing: The Cybersecurity and Infrastructure Security Agency, you know, CISA, does not have access to Anthropic's powerful Mythos Preview model, even though some other government agencies are using it, two sources tell Axios. This matters because the country's top cyber defense agency, tasked with helping to secure everything from banks to power plants,

[00:34:03] is on the outside looking in at a time when the industries it works with are deeply concerned about AI-powered cyber attacks overwhelming their defenses.

[00:34:17] This is Axios bringing less informed readers up to speed: Anthropic decided against a public release of Mythos due to its unprecedented ability to quickly discover and exploit security vulnerabilities. Instead, Anthropic provided it to more than 40 companies and organizations, who are now testing it and working to shore up their systems.

[00:34:43] CISA is not on that list. Earlier this month, an Anthropic official told Axios the company had briefed CISA and the Commerce Department on Mythos' capabilities. The Commerce Department's Center for AI Standards and Innovation has reportedly been testing Mythos. So they have it.

[00:35:06] The NSA is also among the organizations using Mythos, despite the Department of Defense, which oversees the agency, having declared Anthropic is a "supply chain risk," unquote. It's unclear if the ongoing turmoil within the agency during the second Trump administration played any role in the agency not moving more swiftly to secure access.

[00:35:33] Spokespeople for CISA and Anthropic both declined any comment for this reporting by Axios. They wrote: The Trump administration has spent the last year, as we know, reducing capacity at CISA, instead opting to give more policy influence to the White House's National Cyber Director

[00:36:02] and pushing some programs out to the state and local level so trying to you know distribute this instead of having it as centralized as it had been under cisa cisa's acting director a guy named nick anderson told lawmakers last week that the agency's resources are quote more limited than i would like unquote he said

[00:36:25] Trump has proposed cutting as much as $707 million more from the agency's budget in the upcoming fiscal year. CISA has already lost more than a third of its workforce and millions of dollars in funding. National Cyber Director Sean Cairncross is among the Trump officials negotiating broader civilian agency access to Mythos. The Treasury Department has also been negotiating access.

[00:36:55] Sources tell Axios that other organizations with access to Mythos have predominantly been using it to find exploitable security vulnerabilities within their own networks and software. Security teams at critical infrastructure organizations have often looked to CISA to share threat intelligence across their sectors and determine how to prioritize their security strategies. And as we know, those critical infrastructure organizations

[00:37:23] have very much depended upon CISA, but also on that blanket of hold harmless, so that they're free to disclose things they discover, which is still a little bit in limbo.

[00:37:39] So I hadn't heard about this acting CISA director, Nick Andersen, so I checked him out, and he appears to be eminently competent and qualified. He's a decorated U.S. Marine Corps veteran who served as CIO, Chief Information Officer, for Navy Intelligence and head of the Office of Intelligence, Surveillance and Reconnaissance Systems and Technologies at the U.S. Coast Guard.

[00:38:07] He served on active duty managing intelligence in Iraq, Europe, and Africa, and is a veteran of Operation Iraqi Freedom. He served as Principal Deputy Assistant Secretary at the Department of Energy's Office of Cybersecurity, Energy Security, and Emergency Response, where he led national efforts to secure U.S. energy infrastructure. He also served as federal cybersecurity lead and senior cybersecurity advisor

[00:38:36] to the federal CIO at the White House Office of Management and Budget. So, you know, he's certainly competent to be on top of CISA. I've got no complaints with Nick's background. It appears what he needs is more resources and support, and that CISA's lack of access to Mythos is largely due

[00:39:00] to the, as we're now calling it, War Department's unfortunate feud with Anthropic. Anthropic made clear in 2025, at the time that it signed its contract with the Pentagon, that it did not want its AI technology to be used for mass surveillance of people within the United States

[00:39:23] or for fully autonomous weapons systems. As we know, the Department of War subsequently demanded that Anthropic drop those restrictions, and Anthropic refused to do so. They published a public statement explaining their position. And regarding fully autonomous weapons, they wrote: Frontier AI systems

[00:39:46] are simply not reliable enough to power fully autonomous weapons, and without proper oversight, fully autonomous weapons cannot be relied upon to exercise the critical judgment that highly trained professional troops exhibit every day. Anthropic offered to work with the Department of War on R&D to improve the reliability of these systems but were turned down.

[00:40:11] So after that, in apparent retaliation and without any evidence, the Pentagon suddenly declared Anthropic to be a supply chain risk. And this is all very unfortunate, since CISA should absolutely have access to Anthropic's Mythos Preview. Hopefully the White House's National Cyber Director, this Sean Cairncross, who appears to understand

[00:40:36] the need, will be able to make something happen. You know, it's clearly ridiculous to have one of the U.S.'s leading AI firms frozen out of the government because, as Secretary Pete Hegseth declared, it is woke AI, whatever that means in this context. For the time being, it appears that CISA is silent for purely political reasons. Politics should not intrude into this

[00:41:04] at all, and unfortunately it very much has. I mean, CISA is in the doghouse because of what happened in 2020, right? Chris Krebs? Right. And now the White House is saying they want approval of all future AI models, period. They're about to draft a proposal that AI models can't be released without government approval. This is exactly the wrong direction to take with this stuff. Well, and I also did see that Anthropic wanted to do a second round.

[00:41:33] They wanted to expand their program by adding an additional 70 organizations that would have access to Mythos Preview, and the White House said no. It's, like, blocking their ability to incrementally roll this out. An incremental disclosure here is exactly what you want. You get the core 40, they have a month

[00:41:57] with it, and then you widen the circle again and let, you know, the next tier have access to it. Yeah, it's a little infuriating, because political motivation and what's the right thing to do from a security point of view don't necessarily coincide. And that's what you're seeing here, and it makes us all less safe, frankly. Yeah. Okay, break time, and

[00:42:24] then we're going to look at this newest Linux local privilege escalation and look at how AI is reshaping the bug bounty business. Excellent. Well, I could just sit back and relax, because Petaluma Leo is going to take control. This episode of Security Now is brought to you by Meter, the company building better networks. If you're a network engineer, you know the headaches: legacy providers, inflexible pricing,

[00:42:50] IT resource constraints stretching you thin, complex deployments across fragmented tools. Look, you're mission critical to the business, but you're working with infrastructure that wasn't built for today's demands. That's why businesses are switching to Meter. Meter delivers full-stack networking infrastructure, wired, wireless, and cellular, that's built for performance and scalability. Meter designs the hardware,

[00:43:16] they write the firmware, they build the software, they manage the deployments, they provide support. Meter offers everything from ISP procurement to security, routing, switching, wireless, firewall. They do cellular, they do power, DNS security, VPN, SD-WAN, multi-site workflows, all in a single solution. Meter's single integrated networking stack scales from major hospitals, branch offices, warehouses, and large campuses

[00:43:45] to data centers. Even Reddit. The assistant director of technology for Webb School of Knoxville said this, quote: We had more than 20 games on our campus between our two facilities. Each game was streamed via wired and wireless connections, and the event went off without a hitch. We could never have done this before Meter redesigned our network. With Meter, you get a single partner for all your connectivity needs, from first

[00:44:11] site survey to ongoing support, without the complexity of managing multiple providers or tools. One number to call. Meter's integrated networking stack is designed to take the burden off your IT team and give you deep control and visibility, reimagining what it means for businesses to get and stay online. Meter, built for the bandwidth demands of today and tomorrow. Thanks to Meter so much for supporting Steve and Security Now, and we invite you

[00:44:39] to go to meter.com/securitynow and book a demo. You'll be glad you did. That's M-E-T-E-R, meter.com/securitynow. Book a demo. Okay, Meter. All right, now back to Steve. All right, Steve, on we go with security. So the news late last week was the discovery of another serious local privilege escalation in the Linux kernel.

[00:45:06] And it had been there for a long time. And yes, before you ask, it was found by an AI vulnerability discovery system operated by a security firm named Theori. They wrote, quote: An unprivileged local user can write four controlled bytes into the page cache of any readable file on a Linux system and use that to gain root.

[00:45:33] A simple 732-byte, nine-line Python proof of concept has been posted to GitHub, which immediately elevates any normal user to root. And of course, that's not something you want to leave unpatched. So this is important,

[00:45:56] and the Linux distros that are known for sure, Debian, Ubuntu, and SUSE, have immediately issued patches for the problem, and overseers of many other distros have as well. Red Hat initially said it was going to defer the fix but then later changed its guidance to indicate

[00:46:23] that it will be going along with the other distros and will be patching promptly. The CVE has been rated as high severity at a 7.8 out of 10. I mean, still, that's bad. 7.8 is, you know, as bad as it gets for a local privilege escalation, but the attacker first needs to get into a non-root account where they're able to then execute this script in order to obtain

[00:46:53] elevation. But on the other hand, anybody who has local access to a machine is also able to use this. So it's a complete breach of Linux, you know, account security. At the end of one of the reports of this, I ran across the statement: AI-assisted vulnerability research recently prompted

[00:47:17] the Internet Bug Bounty, that's IBB, the Internet Bug Bounty program, to suspend awards until it can understand how to manage the growing volume of reports. I thought that was interesting, and it was, so I went hunting. Here's what I found about that. Near the end of March, the Internet Bug Bounty program,

[00:47:42] which is run by HackerOne, paused their acceptance of new vulnerability submissions due to what HackerOne described as an increasing imbalance between vulnerability discoveries and the ability of open source maintainers to remediate them. And of course, yes, AI is the underlying driver of all this.

[00:48:05] Okay, but let's back up a little bit. Recall that the Internet Bug Bounty is a crowdfunded vulnerability reward program that was started 14 years ago, back in 2012, and it's operated through the HackerOne platform. Its purpose and intent is to reward and thus incentivize

[00:48:30] independent security researchers to find and responsibly disclose vulnerabilities in widely used open source software. The funding for the program comes from a consortium of major tech companies, including Facebook, GitHub, Shopify, TikTok, and others, who all contribute to a shared bounty pool. The underlying idea is that since

[00:48:54] everyone depends on open source infrastructure, everyone should share in the cost of helping to secure it. And the vulnerability discovery payout structure is pretty simple: 80 percent of each awarded bounty goes to the researcher who reported the vulnerability, with the remaining 20 percent being contributed to the open source project itself,

[00:49:17] where the trouble was found, to support, you know, its repair and remediation. So that helps to fund the remediation work and makes the program go. It's been widely seen as a success, having paid out more than one and a half million dollars since the program began. But almost predictably, AI has messed everything up. HackerOne stated, quote:

[00:49:45] The discovery landscape is changing. AI-assisted research is expanding vulnerability discovery across the ecosystem, increasing both coverage and speed. The balance between findings and their ability to fix them, you know, remediation capacity in open source, has substantially shifted. So the problem is being called

[00:50:13] triage fatigue. And the trouble is not just the increased volume of reports. That would be bad. And what's interesting is it's also not the signal-to-noise ratio. The actual problem is the nature of the noise.

[00:50:31] Weirdly, the quality of the noise, while still noise, has increased. We all know Daniel Stenberg, the creator of curl. He expressed it this way. He said: More convincing crap is worse than obvious crap. You can't dismiss it quickly. You have to

[00:50:55] investigate it, and you waste real time getting to the point where you can prove it's nonsense. At scale, this stops feeling like a helpful external contribution model and starts to resemble something closer to a denial of service attack on the people who are responsible for security. Which is, like, yikes. A consequence of AI. So,

[00:51:24] turning the clock way back, 31 years ago, in 1995, Netscape launched the first widely recognized paid bug bounty program, offering to pay researchers, back in 1995, for their responsible reporting of significant bugs which they discovered in Netscape Navigator 2.0. So they were really ahead of the game at that point. Of course, they

[00:51:54] also had a web browser that was ahead of the game. And that model, the notion of paying researchers for responsibly reporting bugs they find, has been functioning vibrantly ever since. So the notion that AI may be driving a fundamental change to this long-standing vulnerability

[00:52:19] discovery and reporting model is important enough, as I said at the top of the show, to be a contender for today's main topic, except that the idea of Google going off half-cocked and adding an explicit AI interface for JavaScript in Chrome also needed ample discussion space today. And we're going to cover Mozilla's pushback

[00:52:42] against that at the end of the podcast. But meanwhile, the company Aikido, which is deep into automated vulnerability discovery as a business, recently interviewed not only curl's Daniel Stenberg, who I just quoted, but also Casey Ellis. Casey's the founder of Bugcrowd, and as such is one of the people who helped establish and formalize

[00:53:07] bounties for bugs starting back in 2012. Aikido titled their report "Bug bounty isn't dead, but the old model is breaking." I'm going to share what they wrote and also what my intuition immediately suggests about the nature of the change. So they wrote: Bug bounty has been a very hot topic lately.

[00:53:32] We're seeing high-profile programs go offline or fundamentally change. The Internet Bug Bounty, one of the most important programs for open source programs, is pausing submissions. Curl is removing payouts, and Node.js is removing its bounty entirely. That's not noise, that's signal. We wanted to understand where bug bounty is actually heading,

[00:54:01] so we sat down with two of the most credible voices on opposite sides of this conversation: Daniel Stenberg, creator of curl, who's living the maintainer reality and recently halted bug bounty payments, and Casey Ellis, the founder of Bugcrowd, one of the people who helped establish the model in the first place. What we found was that the bug bounty model is at a crossroads, and we're in the midst of a big shift.

[00:54:31] Before we get into where the model is headed, let's take a step back and understand why it's been one of the most effective ideas in security over the last decade. It all stems from the idea of letting the internet try to break your stuff before attackers do. And it worked because it gave companies scale they could never hire. As Casey put it, quote: If you're trying to outsmart a global pool of attackers

[00:54:59] with someone working nine to five, the math for that is wrong, unquote. They said: That's the magic of bug bounty. Instead of relying on a handful of internal people, you tap into a global pool of different skill sets, different perspectives, and different motivations, all attacking your system in ways your internal team never thought of. And that's without the significant overhead required to hire specialist experts

[00:55:29] internally and then work to keep them busy. All this explains why bug bounties became fundamental to modern security programs. What's changing now is not the demand for security. It's the economics of how bug bounties operate. AI has altered the balance, and not in a good way. Finding bugs is now

[00:55:56] cheaper than ever, writing reports is even easier, and submitting them has become effectively frictionless. Meanwhile, the cost of validating those reports and then actually fixing the issues has not changed at all. Those final two required steps, validating and then fixing bugs, remain as labor intensive as ever.

[00:56:22] We are seeing this play out in practice. There are three types of report submitters. There are those companies that use a new approach for legitimate reports. These are reports that use layered AI approaches that combine the strengths of multiple AI models, guardrails, orchestration, and context, such as Aikido's own AI pen testing

[00:56:50] capabilities. And Aikido is, of course, plugging their own solution, as we would expect them to on their own website. But we know that Anthropic also set up their Mythos Preview system to do the same. Both are discovering and, importantly, verifying suspected vulnerabilities to produce much higher quality reports, which in the case of Mythos include proofs of concept of exploits. Aikido continues

[00:57:20] enumerating these three classes of bug sources. So they said: Then there are individuals who escalate their research and report writing using AI as a tool. And finally, there are individuals who are able to upskill by virtue of these AI models. They generate reports that seem technically plausible

[00:57:44] but are still completely wrong. Daniel described it perfectly. And this is where we quoted him earlier, saying: More convincing crap is worse than obvious crap. They said: You can't dismiss it quickly. You must investigate it, right, because it looks real, and then you waste real time getting to the proof that it's nonsense.

[00:58:09] At scale, this stops feeling like a helpful external contribution model and starts to resemble something closer to a denial of service attack on the people responsible for security. And the impact, they write, has been truly devastating. The Internet Bug Bounty program paused all new submissions because AI has dramatically increased discovery volume beyond what their maintainers can handle.

[00:58:38] Node.js lost its bounty when funding disappeared. The reports still come in, but the payouts are gone. And curl removed financial rewards after being flooded with AI-generated reports. Casey emphasized that this isn't a new problem. It's an old one, just massively accelerated. He said: We're doing stupid things faster with more energy.

[00:59:06] Bug bounty, they write, has always had an issue with being a level playing field. One person submits a report, and another person has to validate it.

[00:59:15] That sounds equal on paper, but in practice it has always been difficult for one person to keep up with validation, even before AI existed. Now it's practically impossible. We're now in a world where anyone can generate dozens of reports, make them appear credible, and submit them instantly. On the receiving end, however, the constraints have not changed. It's still humans reviewing, triaging,

[00:59:44] and making decisions. Open source has been the first to feel this impact. Open source is where the pressure has shown up first, largely because it was already operating close to its limits. Most projects are maintained by small teams, often volunteers, with limited time and resources, yet they underpin massive portions of the web. Of course, we all think of that xkcd cartoon, right, with a little tiny block that's holding up this whole, you know, creaky

[01:00:14] infrastructure. They said: Add financial incentives, global participation, and now AI-generated submissions, and the system is quickly overwhelmed. The Internet Bug Bounty program said it directly, quote: AI-assisted discovery has shifted the balance between findings and remediation capability. Translation: we're finding more bugs than we're able to handle.

[01:00:42] So now the bounty is gone, and yet the expectation of reporting remains. But the question is, is the way bug bounty programs have been used to effectively scale security teams that improve security posture still viable without financial incentives? Bugcrowd's founder, Casey Ellis, doesn't necessarily believe so. Every organization should have a vulnerability disclosure

[01:01:12] program, because if you're on the internet, people will find issues. But not every organization is in a position to run a public, reward-driven bounty program. In Casey's words, curl likely should not have had one to begin with. Casey said: I don't think every organization should run a bounty program. The curl program should not have been a bounty program in the first place, unquote. And yet Daniel's experience shows something

[01:01:41] more nuanced. Daniel views the bounty program as a success because it incentivized real scrutiny of the code. He said, quote: I've always thought about it as a success, because it's a great way to actually encourage people to scrutinize the code.

[01:02:00] So what happens when you remove financial incentives? You'd assume that when you remove financial incentives, you'd get rid of AI slop, but that you'd also reduce the likelihood of genuine vulnerabilities being disclosed. However, when curl removed the financial incentives, something interesting happened. The low-quality,

[01:02:28] AI-generated noise largely disappeared. Daniel said, quote: We have stopped getting AI slop security reports. Instead, we get an ever-increasing amount of really good security reports submitted in a never-before-seen frequency, which put us under serious load, unquote. Okay, so I'm going to interrupt here to mention

[01:02:58] that I have a theory about why that is. Back when discovering vulnerabilities required long hours of painstaking, grueling work to step through and reverse engineer code, it was no fun. The only motivation, and it needed to be significant, was the promise of a big pot of gold payout at the end of that tunnel.

[01:03:26] AI-driven vulnerability discovery has changed that. Today, AI makes bugs both fun and easy to find. It allows less skilled users to participate, thus broadening the bug hunter base. And there are plenty of people who would sincerely like to give back and contribute. Until now, they haven't been able to. But

[01:03:56] now they have the means. They don't need a monetary incentive. They truly want to help. I think it makes sense. Aikido continues with their report, writing, Instead of drowning in low-quality reports, maintainers are now dealing with a high volume of genuinely useful findings, many of which are powered by AI-assisted research. The barrier to entry has dropped, not just for bad reports,

[01:04:26] but for good ones too. But this creates a new kind of pressure. Even high-quality reports take time to understand, to validate, and to repair. And many of these good findings still fall into gray areas, bugs that may not meet security thresholds but still require some attention. The result is a sustained and in some ways increased load on already constrained teams.

[01:04:54] So in a strange way, the system has not been relieved. It's been refined. And this is where it gets interesting because while this is painful in the short term, it might actually be a step in the right direction. By removing financial incentives, we strip away a large portion of the noise. What's left is a signal that is on average of higher quality,

[01:05:21] more intentional, and more aligned with actual security outcomes. AI is lowering the barrier for researchers to do meaningful work. It's enabling more people to find real issues faster than ever before. That combination, less noise, more signal, but still overwhelming volume, suggests we're in a transition phase. The historical model is breaking under the pressure,

[01:05:50] but what's emerging underneath it might be better. This would look like a system where disclosure is expected, not incentivized. Rewards are more targeted, not broad. And the focus shifts from more reports to better outcomes. We're not there yet. Right now, we're in the messy middle, where the old model no longer works and the new one hasn't fully formed yet.

[01:06:19] But if this plays out correctly, we don't end up with less bug bounty. We end up with a more sustainable version of it. What we're likely moving toward is a model where vulnerability disclosure becomes a baseline expectation across the industry, rather than something optional or incentivized. Public bounty programs don't go away, but they become more controlled, more targeted,

[01:06:46] and more aligned with organizational maturity. AI will inevitably play a larger role in filtering and triaging the growing incoming volume of reports. It won't solve the problem entirely, but it will become part of how we manage it. We'll also see a shift in what gets rewarded. As automated systems become better at finding low-level issues, the value of those findings will drop.

[01:07:13] Instead, incentives will move toward higher-impact work, the kind that requires creativity, context, and a deeper understanding of the systems. That means researchers will increasingly focus on areas like chaining vulnerabilities, exploiting business logic, and breaking complex or emerging technologies where automation may continue to struggle. Okay, so think about this from the bounty provider's standpoint.

[01:07:43] Taking Curl as an example, Daniel terminates bug bounty payouts and observes an immediate drop in the total number of reports. But it's the bogus reports, predominantly, that disappear, not the useful reports that describe true problems. Given that, why would he ever resume bounty payouts? The internet bug bounty is likely to observe the same thing.

[01:08:12] As I noted, what appears to be happening is that bugs are now so much easier to discover, even fun to find and report, that it's no longer necessary to dangle a carrot. Actual human altruism, which, believe it or not, in 2026 still exists, is now sufficient to drive what once required the promise of payment.

[01:08:39] It'll take a while for this to percolate throughout the industry. But my prediction is, you know, that the 31 years of bug bounty programs we've had ever since Netscape first offered payment for reports of bugs in Navigator 2.0 is probably going to wind down over time. And the reason our programs are currently overwhelmed by good bug reports is that, unfortunately, they are very buggy.

[01:09:09] It's going to take a while. I mean, this is that new phase where AI is truly finding problems that were not known to exist. Those will wash out of the system over the next six months or so. And then the volume of really good reports will necessarily drop because there won't be nearly as many bugs to be found, you know, in real time.

[01:09:37] And as AI then continues to check code before it goes out the door, we're not going to have new bugs introduced into the ecosystem. I think it's really interesting that potentially we are talking about a major shift in the way, you know, bugs are discovered. It won't nearly be as much for money moving forward as it has been in the past, Leo. Okay. You want to take a little break? I do.

[01:10:07] All right. And then we're going to look at a new product from Anthropic, which we might call Mini Mythos. Mythos Light. Mythos Light or Mythos Junior or something. Yes. And it's available to all Claude Enterprise users now. Okay. Oh, cool. You're watching Security Now, Mr. Steve Gibson. We do this show every Tuesday right after MacBreak Weekly. That's about 1:30 Pacific, 4:30 Eastern, 20:30 UTC.

[01:10:38] And you can watch it live if you really want the freshest version of it. Our club members get to watch in the Club TWiT Discord. But there's also, of course, not TikTok, x.com, Facebook, LinkedIn, Twitch, YouTube, and Kick. So pick your platform, watch us live, or get it after the fact on Steve's site, grc.com, or our site, twit.tv/sn. We'll have more Security Now right after this. This episode of Security Now is brought to you by

[01:11:07] Bitwarden, the trusted leader in password, passkey, and secrets management. With over 10 million users across 180 countries and more than 50,000 businesses, Bitwarden is consistently ranked number one in user satisfaction by G2 and software reviews. With Bitwarden Access Intelligence, organizations can identify weak, reused, or exposed credentials and take action immediately while vault health alerts and password coaching surface risks to individual users

[01:11:37] in real time and guide them to fix issues on the spot, turning one of the most common causes of breaches into something visible, prioritized, and fixable. And now, Bitwarden is introducing the new Agent Access SDK, a powerful way for developers and teams to securely integrate controlled credential access into applications, automation workflows, and AI agents. It enables programmatic, just-in-time access to vault-stored credentials without exposing sensitive data, supporting secure use

[01:12:06] within modern development environments. Now, this release does not incorporate, very important, does not incorporate any AI functionality into the Bitwarden solution, and, maybe even more importantly, does not grant AI systems persistent or unrestricted access to your vault data. That's not the point. The Agent Access SDK is a separate open-source development toolkit designed to enforce secure, human-approved, and scoped credential access

[01:12:36] for teams that leverage AI agents in their workflow. It's available now in an alpha phase, early days yet, for testing, but they want everybody to use it, not just every Bitwarden customer, but everybody using any password manager anywhere. The Agent Access SDK introduces a secure framework for how agents request, receive, and use credentials, helping define a model for safe credential interaction in agent-driven systems. And I love Bitwarden because they're giving it away. Any password company that wants to use it

[01:13:06] can use it. It's open. Bitwarden now enables passkey login, I love this, for Windows 11, securely unlocking devices at the OS level. Of course, they have to work with Microsoft on this to provide native passkey support. This will extend SSO to automatically log users into more apps, making credential management across devices more seamless than ever. And it works with Windows Hello. Imagine never having to enter your password again. For those who want a lightweight option, Bitwarden Lite

[01:13:36] offers a self-hosted password manager designed for home labs, personal projects, or quick deployments with minimal overhead. And don't worry, Bitwarden's open source code, besides the fact that it's on GitHub, it's GPL licensed, you can look at it yourself. It's also regularly audited by third-party experts. It meets all the standards, SOC 2, Type 2, GDPR, HIPAA, CCPA, ISO 27001-2002. Of course, it is absolutely secure. Get started today with Bitwarden's free trial

[01:14:05] of a Teams or Enterprise plan, or get started for free across all devices as an individual user at bitwarden.com/twit. That's bitwarden.com/twit. We thank them so much for supporting Security Now. Okay. No more singing. Back to Steve. Yeah. I thought that was really interesting that the first bug bounty was 31 years ago. That's remarkable. That is really amazing.

[01:14:35] Yeah. Yeah. Yeah. It's a program that has worked, but to me, it really makes sense if we have, I mean, finding bugs and contributing, you know, giving back, we know that there is a lot of altruism out there in the world. You know, people who would like to contribute, you know, but, and so, you know, spending some time working with a security and AI enhanced vulnerability finding system. I think that makes

[01:15:05] just total sense. Well, that's one thing I don't think Netscape could have anticipated 31 years ago, that AI would suddenly be finding all these flaws. For the intervening 30 years, it's been fabulously successful. It's worked really well. Millions of dollars, millions and millions of dollars have been paid out for, you know, authentic bugs and vulnerabilities that have been found. So, the system has been working. Now, we have AI able to pick up that burden and carry it forward. There's another category

[01:15:35] of people who are out of work, bug bounty finders. Well, that's true. It's probably not a career path. Although, if you are an expert in running AI discovery, then you've got a new way to make some money. Well, actually, that's a good point. That Linux Copy Fail flaw, they found it not with the AI solely, but because a very smart security researcher pointed the AI in a specific direction and said, hey, I wonder if this is a problem. And then the AI

[01:16:04] was able to go a little step further. So, it was really a partnership. Exactly. Yeah. Okay. So, we can add, well, it's apropos of the changes being wrought to AI vulnerability discovery that we have Anthropic's announcement late last week of Claude Security, which is now entering public beta for their enterprise customers.

[01:16:33] We could think of it as Mythos Jr., and that's sort of how they're casting it. Here's what Anthropic posted about this. They said, Claude Security, which is what they're calling it, Claude Security is now available in public beta to Claude enterprise customers. AI cybersecurity capabilities are advancing fast. Today's models are already highly effective at finding flaws in software code. The next generation will be more capable still, and will be

[01:17:03] particularly effective at autonomously exploiting these flaws. Now is the time for organizations to act to improve their security, preparing for a world in which working software exploits are much easier to discover. Recently, we made Claude Mythos Preview, which can match or surpass even elite human experts at both finding and exploiting software vulnerabilities, available to a number of partners

[01:17:33] as part of Project Glasswing. But our cybersecurity efforts go beyond Glasswing. With Claude Security, a much wider set of organizations can put our most powerful generally available model Claude Opus 4.7 to work across their code bases. Opus 4.7 is among the strongest models available for finding and patching software vulnerabilities and for discovering

[01:18:03] complex, context-dependent issues that might otherwise be missed. Claude Security, previously known as Claude Code Security, has already been tested by hundreds of organizations of all sizes in limited research preview, helping teams scan their code bases for vulnerabilities and generate targeted patches. Their feedback has shaped today's release, which makes Claude Security available to all enterprise customers.

[01:18:33] It comes with scheduled and targeted scans, easier integration into audit systems, and improved tracking of triaged findings. No API integration or custom agent build is required. If your organization uses Claude, you can start scanning today. Opus 4.7's capabilities are also being brought to cyber defenders through Claude's integration into software tools that many enterprises already use.

[01:19:02] Our technology partners, including CrowdStrike, Microsoft Security, Palo Alto Networks, SentinelOne, Trend AI, and Wiz, are embedding Opus 4.7 into their tools. In addition, services partners like Accenture, BCG, Deloitte, Infosys, and PwC are now helping organizations deploy Claude-integrated security solutions. We're entering a pivotal time

[01:19:32] for cybersecurity. AI is compressing the timeline between vulnerability discovery and exploitation. We believe the right response is to make sure defenders have access to frontier capabilities in the ways most accessible to them, through Claude directly and through our partners. Claude Security can be accessed directly from the Claude.ai sidebar or at claude.ai/security. To begin,

[01:20:03] select one of your repositories or scope to a specific directory or branch. Then start a scan. While scanning, Claude reasons about code much like a security researcher. Rather than finding vulnerabilities by searching for known patterns, Claude seeks to understand how components interact across files and modules, traces data flows, and reads the source code. Once complete, Claude provides a

[01:20:32] detailed explanation of each of its findings, including its confidence that the vulnerability is real, how severe it is, its likely impact, and how it can be reproduced. It also generates instructions for a targeted patch, which users can open in Claude Code on the web to work through the fix in context. It just sounds fantastic. Over the past two months, we've refined Claude Security in line

[01:21:02] with what we learned from its use in production across hundreds of enterprises. Specifically, we've seen that detection quality is paramount. Teams have told us that high-confidence findings are what really accelerate security work. Claude Security's multi-stage validation pipeline independently examines each finding before it reaches an analyst, which drives down false positives, and

[01:21:31] Claude attaches a confidence rating to every result. This means that the signal that reaches the team is worth acting on. Time from scan to fix is the metric that matters. Early users pointed to this consistently, with several teams going from scan to applied patch in a single sitting instead of days of back and forth between security and engineering teams.

[01:22:00] Teams want ongoing coverage, not one-off audits. We've added the option to schedule scans so teams can set a regular cadence around reviewing and acting on findings. With this release, we've also added the ability to target a scan at a particular directory within a repository, dismiss findings with documented reasons so that future reviewers can trust prior triage decisions, export

[01:22:29] findings as CSV or Markdown for existing tracking and audit systems, and send scan results to Slack, Jira, or other tools via webhooks. Okay. Given the windup we've seen from Mythos over the past month, and the way they describe this, I cannot imagine why any organization whose software might contain

[01:23:00] externally exploitable vulnerabilities or bugs would not be jumping on this with all possible speed. As I noted a few weeks back, an organization's own internal software is only closed source to the outside world. To the organization, their own source code is wide open, and there is now an emerging tool that stands a good chance of

[01:23:29] discovering bugs that have until now escaped notice. I would love to be a fly on the wall in the software development dungeons of the world's enterprises, you know, watching their reactions to what they begin seeing from this Claude Security. Basically, anybody is now able to purchase a mini version of Mythos. And I would argue

[01:23:56] that if Mythos is even better at finding bugs, there's still benefit from running this Mythos Jr., you know, Claude Security, over your code base to see if it's able to find something. Certainly, if it can, Mythos would. Mythos may be available in the future. Well, we presume it will be at some point, but you have this now. So, I think this is, you know, a maybe

[01:24:26] in retrospect, a predictable evolution on Anthropic's part, but certainly welcome. I do this anyway. I mean, I don't have Mythos or anything like it. I just have the regular, you know, Claude Opus 4.7 and ChatGPT 5.5. And I always say, in fact, I often have ChatGPT check Claude's work and Claude check ChatGPT's work. Yep. Cross model. And I frequently say, let's do a security audit on these

[01:24:56] repositories. I mean, that by itself is useful. I found all sorts of stuff. I've also had security audits on my systems, and it's found errors and corrections there too. It's, you know, just the regular models are useful. I can't wait to see how Mythos does. Yeah. And I think from what they've said, what this adds is the ability, for example, to schedule scans so that your engineering software development team, they're just working along.

[01:25:26] And then periodically the code base is given a scan and a check to see if anything significant has been found. That's a great idea. I think that's brilliant. Okay. So, OpenAI announced that they've decided, I was very impressed by this, I'll just say ahead of time, to make account login security a selling point.

[01:25:56] Their posting was titled, Introducing Advanced Account Security. And they explain, today we're introducing Advanced Account Security, a new opt-in setting for ChatGPT accounts. And you've got it now, Leo. Designed for people at increased risk of digital attacks, as well as for those who want the strongest account protections available. It brings together a set of heightened

[01:26:25] security measures that help safeguard against account takeover, while making those protections easier to activate in one place. Once enrolled, Advanced Account Security protects users in Codex as well. They wrote, people are turning to AI for deeply personal questions and increasingly high-stakes work. Over time, a ChatGPT account can

[01:26:54] hold sensitive personal and professional context and sit at the center of connected tools and workflows. For some people, like journalists, elected officials, political dissidents, researchers, and those who are especially security conscious, the stakes are even higher. This effort is part of our broader cybersecurity action plan to broaden access to the technologies that can help protect communities, critical

[01:27:23] systems, and our national security. We want users to have the controls to make the security and privacy choices that are right for them. At the same time, we want to ensure users understand, and here's a critical part, understand that the increased protection of advanced account security comes with an increased responsibility for account recovery. And so, now they get specific. Advanced account security brings

[01:27:53] together a series of controls that strengthen sign-in protections, tighten account recovery, reduce exposure from compromised sessions, and give users more visibility into account activity. It's available to opt into in the security section of users' ChatGPT accounts on the web. Protection applies to both ChatGPT and Codex accounts that are accessed through that login.

[01:28:22] So, we have stronger sign-in methods. Advanced account security requires passkeys or physical security keys, while disabling password-based login, helping make phishing-resistant sign-in the default for people who need it most. So, password-based login, gone. You must use a passkey or

[01:28:51] physical security key. Next, more secure account recovery. If a user's email account or phone number is compromised, an attacker may try to use one of them to gain access to their ChatGPT account via email or SMS-based recovery. We know that, right? They say, to reduce this risk, advanced account security disables email and SMS

[01:29:21] recovery and requires stronger recovery methods: backup passkeys, security keys, and recovery keys. Because account recovery is restricted to these more secure methods, OpenAI support will not be able to assist with account recovery for users enrolled in advanced account security. Again, with truly heightened security comes

[01:29:51] much more responsibility. You know, they're saying, we can't help you because you don't want bad guys posing as you to get help from us either. So, you know, now we're talking. You know, hopefully this sort of much more responsible security becomes more commonplace. You know, the only gotcha, of course, is that it makes users entirely responsible for the security

[01:30:20] they claim to want and to cherish. By explicitly removing email and SMS account recovery loops, the most common phishing and other attacks will be thwarted. You know, but, you know, I can see in the case of ChatGPT login, this makes sense. OpenAI explains two additional security enhancements, writing, shorter session management. Sign-in sessions

[01:30:49] are shortened to reduce the window of exposure if a device or active session is compromised. Users also receive alerts when there's a login to their account, and they can review and manage the active sessions across the various devices they're signed into. And finally, automatic training exclusion. People working with especially sensitive information may opt to not have those conversations used for model training.

[01:31:19] With advanced account security enabled, that preference is automatic. Conversations from those accounts will not be used to train our models. They finish saying, using physical security keys such as YubiKeys is one of the strongest defenses against phishing. To make that level of protection easier to access, we have partnered with Yubico,

[01:31:47] a leader in hardware-based authentication and account protection, to offer our users preferred pricing on a customized bundle of the best-in-class security keys. The YubiKey C-Nano is designed to stay in your laptop. You stick it into a USB port and its head basically tucks out a little bit so you're able to touch the little gold

[01:32:17] metal convex head of it in order to authenticate. And they said, for low-friction daily authentication and the YubiKey C-NFC for backup and use across laptops and mobile devices. We are launching this partnership as part of advanced account security, but the bundle will be available to all eligible users in their security settings on the web,

[01:32:46] so more people can adopt stronger, phishing-resistant account protection. Users will also be able to use other FIDO-compliant security keys or use software-based passkeys. So I logged into ChatGPT, which I am no longer using as my daily driver. You know, I've switched to Claude after appreciating how confused an AI's context window would become if I were to share it with my wife, Lori.

[01:33:16] So now we each have our own. Once I was there in ChatGPT, sure enough, the security panel of the settings dialog now has many new features, and I think this is great. I expect to see this sort of enhanced security become a standard feature to more rigorously, I mean, like across the industry, to more rigorously protect the potentially very highly sensitive