DigiCert's latest security mishap triggered not just a scramble behind the scenes, but a cascading crisis that briefly wiped trust from millions of Windows systems. Find out how a single support slip, followed by Microsoft's heavy-handed response, left critical infrastructures exposed.

- The FCC decides router firmware updates are useful.

- Netgear applies for and gets a full FCC pass.

- AI uncovers a 21-year-old critical FreeBSD RCE.

- What was behind that Let's Encrypt outage.

- AI model repositories are overflowing with malware.

- The CISA 2015 info-sharing act is being renewed.

- Edge leaves ALL usernames and passwords in the clear.

- An examination of DigiCert's breach and their response.

Show Notes - https://www.grc.com/sn/SN-1078-Notes.pdf

Hosts: Steve Gibson and Leo Laporte

Download or subscribe to Security Now at https://twit.tv/shows/security-now.

You can submit a question to Security Now at the GRC Feedback Page.

For 16kbps versions, transcripts, and notes (including fixes), visit Steve's site: grc.com, also the home of the best disk maintenance and recovery utility ever written, SpinRite 6.

Join Club TWiT for Ad-Free Podcasts!

Support what you love and get ad-free audio and video feeds, a members-only Discord, and exclusive content. Join today: https://twit.tv/clubtwit

Sponsors:

[00:00:00] It's time for Security Now. Steve Gibson is here. The FCC has backed down a little bit and that's good news for router manufacturers. AI has found a 21-year-old critical flaw in, well, the most secure operating system I know about. We'll talk about the Let's Encrypt outage and then how DigiCert responded to its recent breach. Steve said A plus to DigiCert. That's coming up next on Security Now.

[00:00:29] Podcasts you love. From people you trust. This is TWiT. This is Security Now with Steve Gibson. Episode 1078, recorded Tuesday, May 12th, 2026. DigiCert Does It Right. It's time for Security Now, the show where we cover your security, your privacy and how computers work and maybe a little sci-fi and vitamin D thrown in with this guy right here.

[00:00:58] Because basically, this is the show where Steve talks about the stuff he cares most about. Hello, Steve Gibson. Which I'm not sure if it was self-selecting, but our listeners tend to agree. Yes. So they're like, I mean, I'm getting vitamin D email and sleep supplement questions. And so I know that our listeners are focused. And Steve, we should add coffee to this. Because Steve is, as we well know, a five-shot venti latte drinker. There it is in the giant mug.

[00:01:28] I had some wonderful coffee in Kona. They grow Kona coffee on the side of the volcano. That's how they named it, actually. Yeah. Yes. The name came from the city. But it is amazing coffee. So much so that I bought a large amount of it to bring back. It's just so delicious. No, I mean, good coffee is just in a class by itself. But I don't think you would like Kona coffee. Because I remember we talked about this.

[00:01:58] In fact, this came to mind as I'm drinking it. Because of where it's grown in the volcanic soil, it has less caffeine and very little bitterness, very little bite. And I remember you like the bite. Yes. In fact, decaf lacks the bite. And so it's like, eh, seems a little, why bother? Kona coffee is almost like tea. It's very smooth and delicious and a little bit less caffeinated.

[00:02:24] So, yeah, I thought maybe he wouldn't like it that much, come to think of it. So I didn't send you any. Okay. That's great. I'd rather have salt. Very expensive. I'll send you salt in my way. I do have some salt from Salt Hank that's coming your way. As soon as I figure out how I could package those glass jars in a way that they don't break. We had some special salt made for Steve and his wife. They have a fetish for my son's salt. Yeah, that's right. Okay.

[00:02:52] So we are at episode 1078 for May 12th. And I teased this last week. The news was just breaking and I didn't have the whole story. And actually they didn't have the whole story. I'm talking about a, an interesting problem that DigiCert, the industry's now by far number one certificate authority suffered.

[00:03:22] Um, I titled today's podcast DigiCert Does It Right, because I, and a lot of other industry experts, have singled their reporting out as, this is the way you do this. If you suffer a breach, how do you disclose?

[00:03:44] And anyway, so we're going to, I want to really, you know, give them some props for like the job that they've done and take a look at what they did to just, you know, both give them credit, but also to show the, in, in some detail, this is the way it's done. Right. So many people do it wrong. We should, we should definitely. Well, yeah, exactly.

[00:04:09] And especially we've covered other CAs that have lost their CA-ness because the, of, you know, trying to like, Oh no, that really was. Oh, well, Oh, you found that. Okay. Well then we'll have to talk. I guess we'll have to, you know, yeah, it's just, you know, wrong. So, you know, props to them. Um, we're going to talk about the FCC, however, uh, deciding that firmware updates might actually be a good thing.

[00:04:36] So let's rethink that policy. Uh, Netgear, uh, speaking of the FCC, is the first, and as far as I know, so far only, router manufacturer to get a full pass on this ridiculous... Yeah, Eero just got one yesterday. Oh, good. So there's two now. Good. Uh, also, AI has uncovered a 21-year-old critical remote code execution vulnerability.

[00:05:06] And this one is trivially implemented in one of the most secure Unixes ever, my favorite and the one I use, FreeBSD. Uh, and I should also mention, I didn't get it in the notes for this week, but I'll be talking about it next week, Google has announced that they have uncovered the first AI-generated zero day.

[00:05:30] So we now have a confirmed example of AI, as we have been worried about, uh, even pre-Mythos. This is not, you know, cause presumably the bad guys didn't have access to it. Although we do know that there were some leaks of, of Mythos access, but this has been the concern. So it's beginning to happen. Um, there was also a brief Let's Encrypt outage.

[00:05:56] We have a, uh, another example of a company doing the right thing. Uh, turns out. So there are now some reports, not surprisingly, also of AI model repositories overflowing with, I'm not sure you'd call it malware. Mal-prompting? I don't know what. Mal. It's bad.

[00:06:18] So mal, but anyway, that's, that's a thing now, uh, in addition to, you know, npm and PyPI and everything, all the other open repositories that we've talked about. Uh, the, uh, they, the, it, it looks like CISA, it's C-I-S-A, although it's a decade old.

[00:06:39] It's that, uh, agreement that was signed in 2015, which allows, um, uh, private companies to share. Cyber security things with the government without fear of reprisal looks like that was, um, uh, well, it was temporarily extended. It looks like it's going to be made permanent.

[00:07:00] Uh, we have some very distressing news about the Edge browser and what was found by someone and has now been confirmed not only by people online, but by some of my own, uh, newsgroup participants who have done this and found all their usernames and passwords in the clear. So we'll talk about that. And then we're going to get into a deep look at how you do this right.

[00:07:26] If you are a, a company with serious responsibility for user security, taking responsibility and documenting it, uh, and DigiCert did that. And I'm saying that even though I've wandered away from them, as we know, because of their pricing, but still they're, you know, they're it. So I think a great podcast for our listeners and of course, a fun picture of the week.

[00:07:50] Which I have not looked at, but will at some point with you after this word. Probably then. Yes. From, from our sponsor. We will get to that picture of the week in just a moment. Oh, first. And, and to those who are seeing video. Yes. Leo has an apparently white shirt on. Oh no, but I have to reveal. For the first time in ever. Oh, there was a weird blue stripe hidden behind the microphone.

[00:08:20] It's a Tommy Bahama Hawaiian shirt. Some foliage down below. I guess it does look like a white shirt, doesn't it? I got, I might have to retire this from the collection. We're just so used to the collection of orange slices. And I'm going to go change my shirt after this break. Uh, I'll wear something crazy and kooky. I promise. Uh, I apologize for not. Uh, our show, our show this hour brought to you by CyberHoot.

[00:08:47] If you have ever rolled out security awareness training and thought this feels more like a compliance exercise than actually teaching security, well, you're not alone. It kind of is in many cases. Many times, you know, platforms try to catch users making mistakes. They send fake phishing emails to inboxes. Then they kind of wait for someone to click and then they pounce on them and assign training after the fact.

[00:09:15] And let's face it, that could feel, uh, you know, punitive, and people don't learn when they're being punished. Honestly, it doesn't change behaviors to do it that way. That's why CyberHoot does it differently. They take a different approach. Instead of trying to trick your users into clicking, CyberHoot's HootPhish focuses on teaching them first, not in their inbox after a mistaken click, but in their browser through a trusted, realistic phishing simulation.

[00:09:43] The goal is simple: to build instinct before that click ever happens. You're not trying to trap your users. You're, you're, you're trying to teach them. And by the way, it really works. I've watched Lisa go. We've been using, we've been using CyberHoot and I've been watching Lisa go through the training. It really is great. CyberHoot is completely automated: training campaigns, reminders, escalation to managers, and reporting. That's all handled for you. So instead of, you know, chasing users, you get clear visibility into who's completed

[00:10:12] what and where your risks are. And here's something interesting. CyberHoot also adds a light opt-in social layer. This is kind of cool. Users can connect with coworkers and engage in friendly competition around the training process and, and how they're doing, right? So this isn't forced gamification. It's just enough to increase participation without turning it into a gotcha system. And users love it. It's fun. A little competition adds a little spice, right?

[00:10:42] G2 reviewers also love it. They rate CyberHoot, and I've never seen a rating this good, 4.9 out of five stars. The G2 crowd repeatedly praises ease of use, high participation, brief content, quick, non-punitive training, full automation, and strong support. If your organization is ready to stop punishing people for being human and start actually building

[00:11:08] cyber-smart employees, head to cyberhoot.com/securitynow. And by the way, please use the code securitynow at checkout, you'll get 20% off your first year. So again, that's C-Y-B-E-R-H-O-O-T dot com slash securitynow. And that promo code securitynow gives you 20% off your first year. Just remember to always laugh, learn, and hoot up. By the way, the, some of the awards are the cutest little owls.

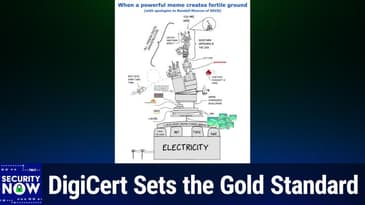

[00:11:38] I just love it. cyberhoot.com/securitynow. Don't forget the offer code securitynow. Now back to our man of the hour, Mr. Gibson. So I gave our picture of the week a title. I titled it When a Powerful Meme Creates Fertile Ground. A powerful meme creates fertile ground, with apologies to Randall Munroe. Oh yes. Of course. XKCD.

[00:12:08] All right. Well, let me, uh, let me pull it up and we'll look at it together for the first time. This is what you begat. So you remember his famous XKCD cartoon with the blocks all teetering on one little block that's written by a single developer. Oh my God. This has gotten a little more complicated. Wow. So this is an updated version of this ridiculous set of towering blocks. Uh, this is hysterical. It's wonderful.

[00:12:39] I'm not sure if those are supposed to be tombstones there at the very bottom, with Linus Torvalds, IBM, TSMC, and K&R. You know, clearly K&R is Kernighan and Ritchie. Um, but at the very, very top, we see a tiny little black speck that says you are here. And then we have a zoom-in window with a little guy there whose thought bubble is WTF.

[00:13:05] Uh, and so there's also some guy who's like sort of teetering on the edge that is, is labeled web dev sabotaging himself. Uh, we've got that all sitting on WASM, and there's a V8 engine, and it's all bracketed saying something happening in the web. Then a bracket on the other side that is more encompassing, uh, titled all modern digital infrastructure. Referring to all that.

[00:13:33] We've got Rust devs flying in from the left. It says doing their, doing their thing, and doing a loop de loop and then slamming into the Oracle block. And it looks like maybe JWT, Java web tokens, are above that. JVM. That's the Java virtual machine. Okay. JVM. And it's teetering. The reason I know, that's teetering on Oracle, which owns it. Right. Oh boy. Perfect. Perfect. And I love the angry bird coming in from the right.

[00:14:01] And it's titled whatever Microsoft is doing. It's the angry bird, apparently going to slam into this whole thing. Uh, CrowdStrike's got its little block. I don't know what left-pad is, Leo. I don't know what that means either. Yeah. Anyway. So also wedged in, now we have a new thing. We have what would be a car jack, if it were, if, if it were going to be a parallelogram and sort of raise the whole thing.

[00:14:31] Instead, this is a, a, a screw-based wedge, which is expanding. Uh, and that's of course labeled AI, cause it's threatening to teeter the whole thing off its axis and cause it all to come crashing down. We have that one C99 project based on behavior of undefined behavior, and not to be left out,

[00:14:56] we've got a Cloudflare block and a set of four of the lava lamps, which of course Cloudflare made famous for their, for their true random number generating, uh, system based on the wax in the, in the lava lamps. I also like that bunch of little blue squares in the lower right. I had to figure out what I was looking at. I realized it's a shark biting an undersea data cable.

[00:15:24] Uh, it's, which of course is going to cut off a whole chunk of the internet if, uh, if it chewed through the, the, the cable laying on the ocean floor. libcurl, not to be forgotten, is there. AWS. Uh, uh, C developers writing dynamic arrays. And we are reminded that all of this is driven by, made possible by, electricity.

[00:15:51] So they, the, the underlying foundation is a big block of electricity with some electric poles coming in to feed it. Very, very funny. This is not only funny, but really pretty true. There's a lot of, yeah, this is all the stuff we've talked about through the years on the podcast. Yeah. Wow. Very nice. So a fun picture. And thank you to our listener who sent it to me. Okay. So there's news on the residential router front.

[00:16:20] Apparently someone at the FCC got a clue, or at least they listened to somebody who actually knew something about cybersecurity because last Friday they announced a reversal to their previous no updates for you policy.

[00:16:36] Tom's Hardware covered this and wrote, the Federal Communications Commission announced on Friday, May 8th, through its Office of Engineering and Technology, the OET, that it was extending temporary waivers allowing certain foreign-produced drones, drone components, and consumer routers, you know, all those bad things that we think are coming from China, to continue receiving software and firmware updates.

[00:18:48] updates that maintain device functionality, patch vulnerabilities, and preserve compatibility with changing operating systems and network environments. But still no new models, but oh, you can have all the firmware updates you want. Which again, what? The agency argued that the public interest would be better served by allowing these limited updates rather than

[00:19:12] freezing software support entirely. Okay. In other words, duh. Anyway, Tom's adds, the waiver does not reverse the broader restrictions or remove the devices from the covered list. It applies only to already authorized products and the software- and firmware-related changes intended to maintain safe and secure operation. Manufacturers must still comply with other FCC

[00:19:42] requirements governing permissive changes and equipment certification. Okay. So as I said, given January 1st, 2029, that allows for nearly an additional two years of updates to existing routers, which yeah, that's certainly good news. But of course the entire thing remains unspeakably ridiculous

[00:20:10] because control over a router's firmware is all anyone needs to turn that previously authorized and approved router, because it existed back, you know, a year ago, uh, into an internet bandwidth weapon. The hardware doesn't need to change. The model number doesn't need to change. It's all firmware.

[00:20:36] So either you trust the foreign manufacturer of a router or you don't. And if you do, then there's no problem. And if you don't, then limiting updates, like allowing any updates, but you know, limiting them to the original March 1st, 2027 deadline, even that one year is of

[00:21:01] absolutely zero benefit since you've given them under the assumption that they have malicious intent one full year to cook up some new sneaky malware update with which to infect any routers that may be updated during the period of that year. In other words, none of this has ever made any kind of sense.

[00:21:24] As we saw, uh, last week, CISA, our CISA agency has been effectively neutered. Uh, you know, our, our agencies appear to now be staffed and run by people who will not push back against policies that they know are clearly wrong. So this sort of nonsense is what results. It's difficult to imagine this could have happened, uh, back when, you know, CISA was at its original strength and staffing,

[00:21:54] but, you know, cause there would have been people there who would have said, what? No. I mean, there, they, one of the reasons we liked CISA so much was that they had taken such responsibility for getting into the you-must-update-your-stuff business and pushing that out to all of the government agencies over which they had any oversight. Apparently that's not what we do

[00:22:19] anymore, even though it was the right thing to be doing then. One piece of good news, and Leo, you added a second piece of good news, uh, for those who like and use Netgear routers, and now the Eero products, is that even before the addition of those additional two years of firmware updates, Netgear, and now we know Eero, had announced that they had received the FCC's

[00:22:48] conditional approval for their routers. This meant that none of those ridiculous FCC imposed restrictions would affect any of Netgear's and Eero's router products, not those already sold and not any current or future models. It's like this, you know, this membership on this, uh, list just

[00:23:16] doesn't exist. So they get a full pass, uh, which also includes their right to update their firmware with abandon anytime they feel the need. So yay. Okay. So, uh, we've heard again from the guys at AISLE security, remember their name, A-I-S-L-E. Uh, you know, they're that commercial group who've been

[00:23:43] using their own AI, as their name suggests, to find flaws in software, and who were somewhat annoyed, as we discussed a couple of weeks ago, by all the hoopla that Anthropic was able to generate around Mythos. The headline of last Thursday's posting of theirs was AISLE discovers CVE-2026-42511,

[00:24:10] a 21-year-old FreeBSD remote code execution vulnerability. So AI was used. This has been a problem in FreeBSD for 21 years. As we'll see in a second, it actually inherited it from OpenBSD when one open source project, FreeBSD,

[00:24:36] grabbed a chunk of code from a different open source project, OpenBSD. And with it came a serious problem. So this posting of AISLE's was written by the discoverer of this flaw. He writes, FreeBSD is often described as one of the most secure operating systems in the world,

[00:25:00] with its reputation arising from its high-quality networking stack, deliberate engineering, and a philosophy of security through simplicity. FreeBSD's history and usage are remarkable. It powers Netflix's Open Connect infrastructure, Sony's PlayStation OS, part of Nintendo's Switch OS,

[00:25:23] Yahoo's back-end services, NetApp's storage systems, Citrix's NetScaler, has long helped form the software base of major networking platforms, Cisco, Juniper, and so on, WhatsApp's back-end services historically, and is now the focus of a substantial foundation effort to make it work better on modern

[00:25:47] laptops. And he writes, for full disclosure, it remains this author's personal operating system of choice. And to that, I will just add that it's also my own Unix OS of choice. As I've often mentioned, it underlies the pfSense personal firewall router system. Uh, and for me, it runs our DNS and our news

[00:26:12] groups. So, you know, that's the Unix that I chose. Uh, you may remember, Leo, years ago, a guy named Brett Glass was, uh, active in the early days of the PC, uh, industry. Uh, and Brett knew his way around Unixes. And I remember having a conversation with him and saying, so what do you recommend? He said,

[00:26:34] FreeBSD, period. There are other BSDs. There's NetBSD and OpenBSD, but FreeBSD is the one you like. I do. Uh, and it has had some desktop and laptop orientation, and, I think it's $750,000 from some foundation affiliated with FreeBSD, they are making a serious push to make it more,

[00:27:03] uh, desktop and laptop friendly, adding a lot more Wi-Fi drivers and making it a lot more hardware agnostic. So it's, it's still alive and kicking. Anyway, I'll continue. AISLE discovered a remote command execution vulnerability in FreeBSD's dhclient, uh, that is trivially

[00:27:28] weaponizable and wormable, yikes, by any system on the same local network as the FreeBSD system. The vulnerability first entered FreeBSD in the 2005, that's the year we started this podcast. 2005. Really. It's 21 years old, and so is this podcast. The 2005 release of FreeBSD 6.0,

[00:27:54] when OpenBSD's dhclient was imported, and lay dormant, that is, the vulnerability did, until discovered by AISLE. The vulnerability also affected OpenBSD until 2012, when that operating system deprecated dhclient-script completely, indirectly fixing the vulnerability, but FreeBSD didn't.

[00:28:20] The initial flaw was identified by AISLE's AI-based source code analysis pipeline, and then investigated by our triage agents. Joshua Rogers, that's actually the author, so he's referring to himself, of AISLE's offensive security research team traced the relevant code paths, established the full security impact, and developed a proof of concept demonstrating a complete local-network-to-root exploit

[00:28:50] chain. FreeBSD is adding key improvements to laptop support, including greater Wi-Fi support. So the attack surface here becomes even more relevant to everyday systems. A malicious wireless access point, or in some cases, another attacker just sharing the same Wi-Fi network and able to spoof DHCP, can target the exact DHCP path that

[00:29:18] almost every wireless FreeBSD system will rely upon. Imagine you're the author of this post, who runs FreeBSD on their laptop, as this guy does. You're at a coffee shop, airport, or hotel. And as soon as you connect your FreeBSD-equipped laptop to the Wi-Fi, your whole system is hijacked in secret. Imagine you have a PlayStation whose OS is locked down from any unofficial access,

[00:29:47] only to be hijacked by connecting to a network. In other words, this vulnerability affects not only servers, but any FreeBSD machine that connects to a network using DHCP, which is the default setup case for almost everybody.

[00:30:06] The vulnerability was a logic flaw that allowed attacker-controlled protocol data to be persisted into a trusted configuration-like format without proper sanitization, then later reinterpreted in a privileged execution path. That is exactly the kind of bug AISLE's autonomous security platform is built to find.
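
The bug class described here, untrusted protocol data written into a trusted config-like file and later re-read by privileged code, can be illustrated with a toy example. This is a minimal Python sketch and not the dhclient code: the one-directive-per-line file syntax, the `host-name` and `run-script` keys, and every function name are hypothetical, chosen only to show how a missing sanitization step lets a single value smuggle in a second directive.

```python
import re

# Hypothetical one-directive-per-line format:  key "value";
LINE_RE = re.compile(r'^([\w-]+) "(.*)";$')


def write_lease_unsafe(hostname: str) -> str:
    """Persist a network-supplied value with no sanitization (the flaw)."""
    return f'host-name "{hostname}";\n'


def write_lease_safe(hostname: str) -> str:
    """Reject characters that could end the quoted value or start a new line."""
    if any(ch in '"\\' or ord(ch) < 32 for ch in hostname):
        raise ValueError("illegal character in network-supplied value")
    return f'host-name "{hostname}";\n'


def parse_lease(text: str) -> dict:
    """Stand-in for the privileged reader that trusts the file's contents."""
    directives = {}
    for line in text.splitlines():
        match = LINE_RE.match(line.strip())
        if match:
            directives[match.group(1)] = match.group(2)
    return directives


# A value a rogue DHCP server might send: it closes the quote, ends the
# directive, and appends a second directive of the attacker's choosing.
attack = 'laptop";\nrun-script "id > /tmp/pwned'

print(parse_lease(write_lease_unsafe(attack)))
```

With the unsafe writer, the parser sees two directives, the expected `host-name` and an attacker-chosen `run-script`, while `write_lease_safe(attack)` raises `ValueError` before anything reaches the file. Rejecting suspicious input at write time is only one possible design; escaping on write and unescaping on read would be another.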

[00:30:32] And get how he signs off here. He says, like our recent findings in OpenSSL, Firefox, libpng, and Amazon's crypto stack, this result came from disciplined engineering and end-to-end analysis, not model mythology. Oh, please. Okay.

[00:31:26] DHCP reply, containing code or commands that it will execute. As Joshua, who authored this write-up, noted, this could have been extremely serious if it had not been found by the good guys. At some point, we may see those who claim that AI-enhanced software vulnerability discovery never turned out to be such a big deal.

[00:31:55] Remember, though, that Y2K could have been a big problem if it hadn't been caught beforehand and dealt with. Objective observers would, I think, do well to remember all of the many critical vulnerability discoveries like this one that did serve to clean up our archaeological code base before the bad guys had the chance to get in there and exploit it.

[00:32:23] The question is, of course, how long it's going to be before the bad guys get access to these models. Yes.

[00:37:29] I don't know. The router, Hugging Face. And I know Hugging Face. You have to know that because Hugging Face has more than a million models. Yes. Where'd they come from? Yeah. People are just making them. Do you need them? No, no. Well, you need some. I don't know if I need the bad ones. We'll find out what that is in just a little bit.

[00:37:59] But first, a word from GuardSquare, our sponsor for this segment of Security Now. Now, are you a mobile app developer? You really want to listen up here. Mobile apps today are, and this is good news for you as a mobile app developer, an inescapable part of life. We use mobile apps for everything from financial services to healthcare to retail to entertainment. And here's the thing.

[00:38:25] Users trust those mobile apps with their most sensitive personal data, especially in finance and healthcare, right? Unfortunately, a recent survey showed 72% of organizations have experienced a mobile application security incident in the past year. 92% of respondents reported rising threat levels over the last two years. I think you don't need a survey to tell you that.

[00:38:50] Meanwhile, attackers who want your users' personal data, I mean, that's the gold they're looking for, are constantly finding new ways to attack your mobile app. I'll give you one way that they do it. For instance, they will take your app. It's actually fairly trivial now with AI and Ghidra to reverse engineer it. Take it apart, repackage it. By the way, this is, I suspect, what's happening with these AI models as well.

[00:39:17] Repackage it and then distribute the modified app. They do it with phishing campaigns. Hey, we've got an update. Hey, you've got to get the latest version. Or sideloading or third-party app stores. There's all sorts of ways to do this. That's just one of many ways they're attacking your app. But you need to prevent this. You need to take a proactive approach to mobile app security. And by doing so, you can stay one step ahead of these attacks. And more importantly, most importantly, maybe, maintain the trust of your users.

[00:39:48] And that's where GuardSquare, our sponsor, comes in. GuardSquare delivers mobile app security without compromise, providing advanced protections for both Android and iOS apps, combined with automated mobile application security testing to find vulnerabilities, and real-time threat monitoring so that you can gain insight into the attacks that are occurring in general and to your app specifically.

[00:40:15] Discover more about how GuardSquare provides industry-leading security for your mobile apps at GuardSquare.com. That's GuardSquare.com. We thank them so much for supporting our show and for the work they're doing to protect us as mobile app users and mobile app developers. GuardSquare.com. Steve, I've replaced the white shirt, as you can see, with a shirt. Ah, now we recognize you. I got this in Orlando when we were out there for the ZeroGator shirt. Yeah, this is from GatorLand.

[00:40:44] It's got gators on it. Yep. Nice. A little bit more recognizable. Not a white shirt, for sure. All right. Now I want to hear about this AI thing. Oh, boy. So last Friday, The Next Web posted the news of an analysis of the large language models being hosted and offered at Hugging Face and ClawHub. The news is not good. Yeah. Here's what they said.

[00:41:09] They said, the two most important software supply chains in artificial intelligence have been systematically compromised. Hugging Face, the repository that hosts more than a million machine... More than a million? What? It's amazing when you go there. It's amazing. I mean, there are, you know, the kind of maybe several dozen root AI models, but then people are creating their own spins of it and so forth. It's really incredible.

[00:41:40] Repository that hosts more than a million machine learning models used by virtually every AI company on the planet has been found to contain hundreds of malicious models capable of executing arbitrary code on the machines of anyone who downloads them.

[00:41:59] ClawHub, the public registry for OpenClaw's AI agent skills, has been infiltrated by a coordinated campaign that planted 341 malicious skills designed to steal credentials, open reverse shells, and hijack AI agents for cryptocurrency mining. The attacks are different in technique, but identical in logic.

[00:42:26] Both exploit the implicit trust that developers place in shared repositories. Both use the infrastructure that the AI industry built to accelerate development as the vector for compromising it. Hugging Face has been aware of malicious models on its platform since at least 2024.

[00:42:50] When security firms JFrog and ReversingLabs independently identified models containing hidden backdoors. My turn to have a throat tickle. Sorry. Yeah, I'm just looking right now at Hugging Face at their model repository. And this is actually kind of stunning.

[00:43:15] They list 2,869,086 different models. My God. Yeah. Well, let's hope they have a good search engine. They do, actually. They have a very good search engine. Wow. And the thing is, it's not like ChatGPT alone. I mean, there are models to do all sorts of things. I have a specific model I use from Hugging Face that's just for text embedding. That's all it does. So, you know, and these are highly customized in many cases.

[00:43:45] So, very vertical application slices. Very vertical. Exactly. Yep. Yep. I mean, it's a great repository. This is really somewhat different, though, from the OpenClaw registry. But I'll let you talk about this because I know. So, they said, Hugging Face has been aware of malicious models on its platform since at least 2024, when security firms JFrog and ReversingLabs independently identified models containing hidden backdoors. The problem has not been contained. It has scaled.

[00:44:15] Protect AI, which partnered with Hugging Face to scan the platform's model library, and given its size, that's no small feat, has examined more than 4 million models and identified approximately 352,000 unsafe or suspicious models across 51,700 models. Hmm.

[00:44:45] Oh, I'm sorry. 352,000 unsafe or suspicious issues across 51,700 models. JFrog found more than 100 models capable of arbitrary code execution.

[00:45:02] The attack technique, known as nullifAI, exploits Python's pickle serialization format, the standard method for packaging machine learning models.

[00:45:20] Attackers embed malicious Python code at the start of the pickle byte stream and compress the file using 7z rather than the default zip format, which breaks Hugging Face's Picklescan detection tool. Well, and that's just dumb that Hugging Face can't check 7z compression in addition to zip. The payloads are not subtle, they write.

[00:45:49] Security researchers have documented models that establish reverse shells, meaning that it connects out to a remote command and control server and says, what do you like me to do now? Connecting to hard-coded IP addresses, giving attackers direct access to the machine of anyone who loads the model. Others execute credential theft, exfiltrate environment variables, or download secondary malware to the user's machine.
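The scanning idea discussed above can be sketched in a few lines of Python. This is only an illustration of the general approach of inspecting a pickle without loading it, not Hugging Face's or Picklescan's actual implementation. The function name `list_pickle_imports` and the demo class are hypothetical, and the string tracking for protocol 4 pickles is a simple heuristic.

```python
import pickle
import pickletools

# Opcodes that push a text string onto the pickle VM stack.
_STRING_OPS = {"SHORT_BINUNICODE", "BINUNICODE", "BINUNICODE8", "UNICODE"}


def list_pickle_imports(data: bytes) -> list:
    """Return (module, name) pairs a pickle would import, without loading it."""
    imports = []
    recent_strings = []
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name in _STRING_OPS:
            recent_strings.append(arg)
        elif opcode.name == "GLOBAL":
            # Older protocols: the argument is "module name" in one string.
            module, name = arg.split(" ", 1)
            imports.append((module, name))
        elif opcode.name == "STACK_GLOBAL" and len(recent_strings) >= 2:
            # Protocol 4+: heuristic, take the two most recently pushed strings.
            imports.append((recent_strings[-2], recent_strings[-1]))
    return imports


class Suspicious:
    # Stand-in for a booby-trapped model; the callable here is harmless.
    def __reduce__(self):
        return (print, ("hello from a pickle",))


blob = pickle.dumps(Suspicious())  # building the bytes does not call print
print(list_pickle_imports(blob))
print(list_pickle_imports(pickle.dumps({"weights": [0.1, 0.2]})))
```

A scanner built this way only sees what it can parse, which is the weakness described above: if the archive wrapper is in a format the scanner does not open, the pickle inside is never inspected.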

[00:46:17] A data scientist who downloads what appears to be a legitimate model for a research project or production pipeline is, in some cases, handing control of their machine to an attacker. Hugging Face has responded by partnering with JFrog and Wiz Security to improve scanning capabilities. Remember that Google bought Wiz.

[00:46:42] JFrog's integration has eliminated 96% of false positives in malicious model detection. But the platform's open architecture, which is the source of its value to the AI community after all, is also the source of its vulnerability. Anyone can upload a model. The scanning catches known patterns.

[00:47:06] The attackers who designed nullifAI built their techniques specifically to evade that scanning. ClawHub, the registry for OpenClaw's AI agent ecosystem, faces a different but related problem. OpenClaw has grown to 3.2 million users and attracted partnerships with OpenAI,

[00:47:30] but its skill registry has become a target for attackers who understand that an AI agent executing a malicious skill has access to whatever the agent has access to, which in enterprise environments can mean databases, APIs, internal networks, and cloud credentials. In other words, we're giving agents, in order for the agent to have agency, we need to give it control and access to things.

[00:48:00] Unfortunately, the malicious skill inherits that. Koi Security audited all 2,857 skills, thank goodness that's a manageable number, on ClawHub, and found, unfortunately, 341 malicious entries.

[00:48:21] Of those, 335 were traced to a single coordinated operation called ClawHavoc. Separately, Snyk's, you know, S-N-Y-K, Snyk's ToxicSkills research examined the broader ecosystem and found that 36% of all, say, better than one out of three,

[00:48:47] 36% of all AI agent skills contain security flaws, with approximately 900 skills, roughly 20% of the total, classified as malicious. So, one in five, deliberately malicious. 30 skills from a single author were silently co-opting AI agents for cryptocurrency mining. You know, which, right, makes sense.

[00:49:15] You got a super powerful GPU, you got, wow, that AI is really working hard for me. No, it's working hard mining cryptocurrency for somebody else. They write, the ClawHub attacks are particularly dangerous because of the nature of AI agent architectures. The rise of Model Context Protocol and similar standards in the agentic era has created a new category of software supply chain

[00:49:43] in which AI systems autonomously select and execute tools from external registries. A compromised skill does not require a human to click a link or open a file. It requires an AI agent to select the skill as part of its workflow, at which point the malicious code executes with the agent's permissions.

[00:50:10] The Hugging Face and ClawHub compromises are the AI-specific manifestation of a supply chain attack pattern that's been accelerating across the entire software industry. In March of 2026, the LiteLLM package on PyPI was compromised, potentially exposing half a million credentials, including API keys for Meta, OpenAI, and Anthropic.

[00:50:40] Meta froze its AI data work after the breach put training secrets at risk. In April, a Bitwarden, as we know, we covered it, command line interface package on NPM was hijacked for 90 minutes with a payload specifically designed to harvest credentials from AI coding tools, including Claude Code, Cursor, Codex CLI, and Aider.

[00:51:06] Days later, the PyTorch Lightning package was compromised for 42 minutes with a credential-stealing payload from the Mini Shai-Hulud campaign. The European Commission itself was breached after attackers poisoned Trivy, and we've talked about that, an open-source security scanning tool,

[00:51:29] demonstrating that even the tools designed to detect supply chain attacks can themselves become vectors for them. The United States Department of Defense published formal guidance on AI and machine learning supply chain risks in March of 2026, acknowledging at an institutional level that the AI software ecosystem has become a national security concern.

[00:51:56] The common thread is speed. The PyTorch Lightning compromise lasted 42 minutes. The Bitwarden CLI hijack lasted 90 minutes. The LiteLLM attack window is estimated at hours. These are not persistent campaigns that defenders have weeks to detect. They're brief, targeted insertions that exploit the automated dependency resolution systems

[00:52:25] that modern software development relies on. A developer who runs a package install at the wrong moment downloads the compromised version. The window closes, but the damage is done. The AI industry has invested hundreds of billions of dollars in model training, inference infrastructure, and application development. The investment in securing the repositories through which that software is distributed

[00:52:54] has been a fraction of the total. Hugging Face has partnered with security firms. ClawHub has implemented basic moderation. Package registries have added two-factor authentication requirements. None of these measures has prevented the attacks documented above. State actors can already produce AI-powered malware that evades conventional detection,

[00:53:22] and the supply chain attacks on AI repositories represent a natural evolution of that capability. The models and skills hosted on Hugging Face and ClawHub are consumed by systems that make automated decisions, process sensitive data, and operate with elevated permissions. A compromised model in a production AI pipeline is not equivalent to a virus on a personal computer.

[00:53:50] It is a backdoor into an automated decision-making system that the organization trusts precisely because it appears to be a legitimate component of its AI stack. The fundamental problem here is architectural. The AI industry built its development infrastructure on the same open registry model that has defined software development for the past two decades.

[00:54:17] Centralized repositories where anyone can publish, automated tools that download and execute code from those repositories, and a culture of trust that treats popular packages and models as implicitly safe. The difference is that AI models are not just code.

[00:54:39] They're serialized objects that execute during deserialization, a property that makes pickle-based models inherently more dangerous than traditional software packages because the malicious code runs the moment the model is loaded before any human has a chance to inspect it. The AI supply chain is now the most attractive target in the software security space. The repositories are trusted.

[00:55:09] The consumers are automated. The payloads execute on load. And the industry that built these systems is spending its security budget on model alignment and prompt injection, while the infrastructure through which the models are distributed remains, in the assessment of every major security firm that has examined it, comprehensively compromised.
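The point above about pickle executing during deserialization can be shown with a harmless sketch. The class names here are hypothetical, and the callable is the built-in `len` rather than anything dangerous. The second half follows the "restricting globals" pattern from Python's own pickle documentation, with an allow-list that is assumed to be empty for illustration.

```python
import io
import pickle


class NotReallyAModel:
    # __reduce__ tells pickle how to rebuild the object: call this callable
    # with these arguments. An attacker would pick something far worse than len.
    def __reduce__(self):
        return (len, ("abc",))


blob = pickle.dumps(NotReallyAModel())
result = pickle.loads(blob)  # len("abc") is called while loading
print(result)


class AllowListUnpickler(pickle.Unpickler):
    # Hypothetical allow-list; empty means plain data types only.
    ALLOWED = set()

    def find_class(self, module, name):
        # Called whenever the pickle asks to import a global.
        if (module, name) in self.ALLOWED:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"blocked import of {module}.{name}")


def restricted_loads(data: bytes):
    """Load a pickle, refusing any import that is not on the allow-list."""
    return AllowListUnpickler(io.BytesIO(data)).load()


print(restricted_loads(pickle.dumps({"layers": [1, 2, 3]})))
try:
    restricted_loads(blob)
except pickle.UnpicklingError as err:
    print("refused:", err)
```

Note that what comes back from `pickle.loads` is whatever the callable returned, not the original object. Nothing in the load step gives a human a chance to look first, which is the property the article is describing.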

[00:55:33] So, Leo, I would say that the caution and trepidation you felt and shared when you were first considering turning OpenClaw loose in your world was likely warranted. Yes, I think it was.

[00:56:21] But anybody can write a skill. It's just plain text. And so, I mean, I guess the skill involved is how you insert the, you know, Shai-Hulud-style, you know, Bitcoin stuff. But it's not complicated. And so that's why I think you're going to see it in these registries for skills. I never use a skill from the public. I look at skills. What I often do, and I would recommend people do this, is I point my… Use them as a training base?

[00:56:51] Yeah. I point my assistant. I say, here's a GitHub repository for a skill. Assess this. Tell me what you think of it and how we could apply it. And then let it write a skill, which I will then check. And that actually works quite well. I've built basically my own OpenClaw from scratch with just the pieces that I want. And it's a lot… I love that you said how we could apply it because you weren't talking about you and Lisa. You were talking about you and the AI.

[00:57:20] Me and Claudia, my good friend. It is so difficult not to think of this thing as an entity. I mean, it is just astonishing. Anyway, I wanted to share this with our listeners because I'm certain that we have many listeners who are enjoying playing around with and experimenting with and perhaps even deploying systems or solutions using these openly available AI models.

[00:57:49] Please, please, please be careful. Because one of the problems here is this makes it… The way this has been built and deployed makes it so easy to use this stuff. That just… I mean, that alone should be a cause for raising a red flag in the minds of any security-aware person.

[00:58:14] So, you know, as you are, Leo, you are looking at this and not just saying go. You're saying, you know, let's take a look at it. What should we do? Well, you've taught me, Sensei, over the many years that we have done this. I'm glad it's sunk in. That's good. But again, it's so easy in a moment of enthusiasm to say, well… And you were tempted, right? When OpenClaw happened, it's like, ooh, should I? Shouldn't I?

[00:58:42] But, you know, somebody else, you know, who hasn't been sitting here for the last 21 years with me, is going to go, hey, this is great. Go. Right. Yeah. If you have any nervousness about it, trust your instincts because you're right. Yes. Basically. So, there's some good news on the horizon.

[00:59:02] The word is that the original decade-long CISA, that's C-I-S-A, 2015, stands for Cybersecurity Information Sharing Act, which, as we have talked about on a number of occasions, expired last year because it had a decade-long life from 2015 to 2025.

[00:59:27] Which was then temporarily extended until this coming September, is now in the process of receiving its much-needed long-term reauthorization. And remember that this is what allows private sector enterprises to share their cyber intelligence with the government without fear of any legal blowback or reprisals. So, this gives them cover.

[00:59:55] And we've heard from CEOs and CIOs who've been saying, you know, we really need to share some stuff that we have, like, it's important, but we can't because, you know, we can't risk having ourselves, you know, taken to court. So, anyway, hopefully another decade worth of coverage is coming soon.

[01:00:26] A number of our listeners pointed me at the news that Microsoft's Edge browser is doing very little, much less than it could, to protect its users' passwords. A posting from the SANS Institute, which I've enhanced a bit, reads, yep, it's for real.

[01:00:51] The posting wrote, this started with a post in X, which highlighted research by, and we have an at sign handle that's clearly hackerized living off the LAN with, you know, ones and O's and zeros and threes. I don't know. Is he a 12-year-old? But that person did find this issue.

[01:01:14] The SANS Institute posting said, Edge stores all of your browser passwords in clear text. Oh, great. Okay. Yep. Oh, great. Even if you have not used them in this session, you know, just in case, he writes. You might want them. He said, I figured it couldn't be that easy, right?

[01:01:44] But like so many things, yes. Yes, it was. To reproduce this, open Edge. Don't browse anywhere. Just open it. He says, flip out to task manager, search for Edge, then expand that task. Highlight the browser subtask, right-click and choose create memory dump. Navigate to where the DMP file is stored.

[01:02:11] If you have not used strings before, you're in for a treat. Strings is, of course, just part of most Linux distros, but you can easily get a copy for Windows as part of Microsoft Sysinternals. Now let's look for passwords. You could use strings and look for known credentials. Just search for a known password and you will certainly find it.

[01:02:34] Or you can take advantage of the format of the saved data, which is the URL of the site, followed by its protocol, meaning like HTTPS probably. Then a space. Then the user ID, a space, and the password. All of that for the site. He says, so searching for TLD protocol, right? For example, google.com immediately followed by HTTPS.

[01:03:03] He says, which in most cases is like, or just com, just C-O-M, HTTPS with no spaces. He says, we'll find them and they'll all be in one nicely formatted group, no less. The command for that will be: strings -n 8 msedge.dmp | find "comhttps"

[01:03:29] And then hit enter. Bang. He says, it really is that easy. And the ironic thing, to view these same credentials in the browser, there's a whole security theater process where Edge wants your biometrics as proof before disclosing even the user ID and site names.

[01:03:58] You know, for security. All the while, the whole shot is there in clear text, free for the looking. Also, as noted in the X post, Microsoft classifies this as intended behavior. I'm not sure what manager or lawyer, he writes, decided that. Hopefully it wasn't anyone in their security team.
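For anyone curious what the strings step described above is doing, here is a rough Python sketch of the same idea, run against made-up bytes rather than a real dump. The function name `printable_runs` and the sample data are hypothetical. It only handles plain ASCII, which is a simplification; the real strings tools handle more cases, and the Windows one can also search for Unicode strings.

```python
import re


def printable_runs(data: bytes, min_len: int = 8) -> list:
    """Return runs of printable ASCII at least min_len bytes long."""
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_len)
    return [m.group().decode("ascii") for m in pattern.finditer(data)]


# Synthetic stand-in for process memory: binary noise around one long run.
sample = b"\x00\x01example.comhttps user1 hunter2pass\x00ab\x00\xff"

runs = printable_runs(sample)
matches = [r for r in runs if "comhttps" in r]  # same filter as the find step
print(matches)
```

The takeaway is that nothing clever is required. Any code running as the logged-in user that can read the process memory can do the same scan.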

[01:04:21] Any logged in Windows Edge user can dump all of their stored Edge credentials with no additional rights, which means any malware that the user executes also has access to all of those credentials for the asking. But he says, not to worry, right? It's intended behavior. Remember, this is what Chrome did for a while.

[01:04:51] Or kept it in plain text. Well, it's Chromium. Edge is Chromium-based. I don't think they do now, though. That's what's surprising. And he said, it's intended behavior. If what's intended is also to get me to use Firefox or Chrome, it's working. Gosh. So, and I did, upon that coming to the attention of some of the guys in our Security Now News group, someone thought, really?

[01:05:20] And did it. And he's like, oh, crap. Yes. That's terrible. There's all of my domains, usernames, and passwords. Basically, you get that. You can log in as that person anywhere. Wow. Crazy. Wow. Okay. We're at an hour. We're going to do some feedback, Leo. Let's take another break, and we will hear from two of our listeners. Absolutely.

[01:05:47] If I can figure out what button I need. Oh, there. Okay. I need to press. It is time to talk about Doppel. As you pointed out a couple weeks ago. Doppel. As in Ganger. Yes. As Steve said. Doppel, our sponsor for this segment on Security Now. Now, maybe that message you just got, maybe that is an urgent message from your CEO.

[01:06:14] Or could it be a deep fake trying to target your business? I think these days, more likely the latter. AI can impersonate trusted individuals. I mean, down to the voice. Down to the... They can do it in video now. Now, Doppel's platform illustrates how frequently users fall for these phishing attempts. They call it vishing, right? With a V. In voice call simulation deployments, target users...

[01:06:43] They didn't know they were target users, right? This was a test. They spent six minutes conversing with a deep fake. And then afterwards, when they talked to them, 100% of them thought, oh no, that's human. It's not. It was an AI. But they didn't believe it. But Doppel is the AI-native social engineering defense platform. Doppel strengthens human risk management by training employees to recognize deception,

[01:07:11] while their digital risk protection detects and disrupts attacks across every channel. As attackers turn to AI to power increasingly sophisticated strikes, Doppel uses AI to fight back with automated takedowns, multi-channel coverage. Yeah, because it's not just email anymore, is it? It's text, it's Slack, it's voice. And Doppel builds AI defenses that put intelligence into every fight.

[01:07:40] Intelligence in your users, too, that builds intelligence with them. Doppel works relentlessly to protect people, brands, and trust. Doppel. D-O-P-P-E-L. Best-in-class integrations and partnerships to seamlessly integrate into the existing security stack you're using. So that's nice. And Doppel's industry awards and testimonials speak for themselves.

[01:08:04] They're recognized as a winter 2026 G2 leader in users most likely to recommend, momentum leader, and best support. Kind of the big three. Join hundreds of companies already using Doppel to protect their brand and people from social engineering attacks. It's out there. It's happening right now. You need Doppel. Doppel. Outpacing what's next in social engineering. Learn more at doppel.com.

[01:08:31] That's D-O-P-P-E-L.com. Doppel. It's a shame we have to do this now, but the attacks are getting so sophisticated. It's really mind-bending. Yeah. The ultimate consequence of the cat and mouse back and forth with escalation on both sides. Yeah. That's actually right. Yeah. On we go with the show, Mr. Gibson.

[01:08:59] So Todd Whitaker, our listener, writes, Steve, I thought this might be of interest for Security Now. The rival security group has a thoughtful follow-up on the Claude Mythos FreeBSD exploit story, arguing that Mythos may not have been quite as creative as the initial coverage suggested. And he has a link to their whole posting.

[01:09:24] He writes, their claim, as I understand it, is not that the result is unimportant. It is that the vulnerability, the prior fix pattern, and perhaps even the exploit-relevant structure may already have existed in the model's training data.

[01:09:43] The FreeBSD issue appears closely related to CVE-2007-3999, occurring in MIT Kerberos. The same general RPCSEC_GSS validation logic, same stack buffer overflow pattern, and a strikingly similar bounds check fix.

[01:10:11] So Mythos may have discovered something genuinely dangerous, but perhaps by recombining known historical material rather than reasoning from first principles in the way many of us initially imagined. He writes, that still seems worrying, just in a different way. If advanced models can rediscover old vulnerability patterns embedded in the fossil record of open source code,

[01:10:41] then attackers may not need models to be brilliant. They only need them to be tireless, well-tooled, and good at recognizing dangerous old ideas in new places. And I'm going to interrupt because Todd has a little bit more to say about something different, but I just want to say I completely agree with that.

[01:11:02] As our understanding of computer science has evolved, one of the things that's happened, and it's kind of gone into the parlance now of computer science, we've come to notice patterns in the solutions to problems.

[01:11:18] You know, they're like a short and small abstraction away from the concrete solution where we see that many different such concrete solutions can be grouped together by their sharing of a common underlying pattern. So what rival security observed was Mythos Preview finding what we might describe as a flawed design pattern,

[01:11:47] a common type of mistake that coders have been making through the years, which winds up being a natural sort of mistake to make due to the underlying architecture of the computer behavior that we're programming. We all know that today's large language models excel at pattern discovery. Probably more than anything, that's what they are.

[01:12:12] So we would expect that if someone somewhere made a similar mistake, and it and its correction had been captured in the model's training corpus, then it would indeed be able to make the connection. So I'd say that this is an interesting and useful observation about the underlying way, in this instance, Mythos discovered, maybe rediscovered, you know, the newer, similarly patterned flaw.

[01:12:42] But I don't, like Todd, I don't see anything taking away from the fact that, you know, as Yoda might say, discovered that flaw it did. Yeah, I mean, it's not like it knew about the flaw, it just recognized the pattern. I mean, yeah, it said, oh, this seems familiar. It's like if you recognized a buffer overflow. I mean, exactly. Yeah, exactly.

[01:13:05] So his note continues with some interesting observations from his own background about AI as a computer science educator. He writes, I would also be interested in your broader take on what this means for computer science education and the profession.

[01:13:24] My current working view is that the most productive human-AI collaboration in software engineering depends on advanced judgment, architecture, design patterns, there's patterns, threat modeling, failure modes, invariants, trade-off analysis, and knowing when the AI's answer is superficially plausible but structurally wrong.

[01:13:51] The problem is not that students must learn the old ways before they're permitted to use the new tools. He says that's just reframing the old learn assembly before C argument. The real issue is that AI makes coding cheaper while making judgment more valuable.

[01:14:13] He said, to supervise AI-generated software, a person needs mental models. How state behaves. Where abstractions leak. How protocols fail. Why concurrency is treacherous. How memory and parsing bugs become security bugs. And why a working MVP may still be architecturally unsound.

[01:14:39] Those mental models do not emerge from prompting alone. If we let AI collapse the difficult apprenticeship too early, we may produce developers who can ask for software but cannot reliably tell whether the software they received is safe, coherent, or professionally defensible.

[01:15:04] He says, I'm writing from my personal email, but my day job is in computer science education. So this one lands close to home. I spend a fair amount of time thinking about what we should still teach humans when machines can increasingly produce the code. So, cool feedback, Todd. Thank you.

[01:15:27] I don't think I've seen any more coherent and clear description of the AI versus human coding question. And I love his one line. The real issue is that AI makes coding cheaper while making judgment more valuable. And I think that captures where we're headed in computer science education and vocation.

[01:15:50] In the same way that any higher level language lifts its coder away from the grubby details of the specific underlying computer hardware, the use of coding-trained large language models clearly lifts its users from the grubby details of the way computers are applied to problem solving.

[01:16:14] As many, you know, never before programmed anything users are discovering, they're now able to simply ask for what they want the computer to do. And the LLM will almost magically produce a potion that does that. But there are clear limits to what can be asked for.

[01:16:37] We saw a perfect example of such limits last week when those bad guys who had created that credit card clearing web portal apparently just forgot to ask for authentication to be added. Whoopsie. What we're seeing rapidly evolve during the rush to use AI for code generation is that for AI to be applied to the creation of any very large and complex solution,

[01:17:07] a solution architect is still required. No large problem can be dropped whole into AI's lap. At least not today, not yet. And I don't know when. Instead, for now, today, a solution architect who's trained and experienced in the application

[01:17:31] of the various higher-level solution abstractions that have been developed over the years of true computer science needs to carefully decompose the larger problem into much smaller, individual, safely codable modules. These solution architects are the true core of the science of computing.

[01:18:00] You know, coding is just their implementation. And these are the sorts of things, you know, that Donald Knuth and other scholars of the art have spent their lives exploring and documenting. So, yeah, I think, Leo, and this is what you've talked about, like the way you're now approaching the application of AI is because you understand

[01:18:24] about the way computers are applied, you're breaking the problem down into pieces that you intuit AI is able to do the grunt work on, but you're, you know, giving it the interfaces that it needs. For the various pieces of individual grunt work. Yeah, and I'm finding more and more, well, I do the coding for as a basis for things like

[01:18:53] cron jobs, like things that are going to run over and over again. And then a lot of what I'm using AI for now is text-based stuff. I had it plan our itinerary for Hawaii, for instance. And if you give it the basis, the information it needs, I have it use my Obsidian journals and things. It does really quite a good job. I sent it to my travel agent.

[01:19:19] I don't know how thrilled she was with the generated recommendations. I wanted her to say whether they were good or not, but I realized she might feel like it was kind of taking her job a little bit. I think it's probably going to. Yeah. Yeah. I mean, there's something that she does that no AI could do, which is the relationship she has with the various vendors. Yes. Yes. And that's very, you know, that's human. And only a human can do that.

[01:19:48] And things that she has heard from her other clients. Yeah. Where it's like, you know, I heard about this, you know, you want to make sure you spend more time at this port. Because. I did. I reassured her. I said, you know, this is, this is a nice starting point, but it can't duplicate what you do as a human being. And I think that's really the lesson that all of us should learn to calm down about AI is that we, we still need humans. Humans add something that no AI can do.

[01:20:17] Or what I think we'll ever be able to do. Yeah. What I have found, I remember very early on, I shared one of my prompts where I was, you know, I went on at some length and I remember, you know, you were surprised that I was talking to it as much. Yeah. Yeah. But if the more language you give it, because it is a language engine, the more language, you know, descriptive language you give it, the more it has to work with. Yes. Yeah. I've found I'm writing more and more detailed specs. Yeah. Yeah.

[01:20:47] And plans than, than ever, because it does help it be more accurate if you're very clear about what you want. Yeah. Okay. Next listener, Randy Crum says, hi, Steve. In episode 1077 last week, you mentioned you think companies that have closed source software should move quickly to utilize Anthropic's Mythos, Claude Security, or similar tools as they emerge. Their closed source code is only closed source to the outside world.

[01:21:17] I think you glossed over the risk that using these online AI tools potentially exposes your closed source code to the world. They have privacy and security tools in place. Sure. But their motivation is financial. They have a setting to disallow using your AI conversations to train their AI models, but you have to trust that they're actually following that setting for the same reason you trust Apple

[01:21:44] with your data more than Facebook or Google. The use of online AI tools to review closed source code is a risk. A security breach internal or external could be devastating to a software company that has their code exposed. He writes, I've been experimenting with LM Studio to run local offline open source LLM models for use with proprietary data. He says, friends note, I work with client data, not code.

[01:22:14] The hypothesis is a local offline LLM can be safely used with confidential internal proprietary data or code. These LLM models are usually not as current as the online tools, but they catch up quickly. Also, they're not as flashy, newsworthy or marketing hyped as strongly as the major online tools.

[01:22:39] What are your thoughts on the risk of exposing proprietary code or data by using the major online tools? Listener since episode one, thanks for everything you and Leo do. So Randy's right. I did gloss over those risks, so I'm glad he brought it all up. And it's not at all that I meant to downplay them. Given everything we know about cloud breaches, network data interception and decryption and

[01:23:06] so on, even if the LLM provider did nothing wrong and made no mistakes, shipping highly valuable source code outside of a company's perimeter creates some risk. However, that said, how many firms are already doing just that by using GitHub? You know, I think that's insane myself. Yet it has become common practice to use GitHub for highly proprietary source code management. I'm not doing that.

[01:23:36] So perhaps my view is skewed. But it does mean that the company's crown jewels are already exposed outside. Randy correctly notes that sending the code up into the cloud for an LLM to rummage around in poses another level of danger. No question about it. So I completely agree with that in principle.

[01:23:57] So the use of local models, which I have absolutely no doubt we will someday see much more in the future, makes a great deal of sense once they become as capable as what's available in the cloud. And at this point, Leo, I heard you mentioning, I didn't realize that there are now laptops being sold without RAM because memory has become so expensive. It's like, get your own RAM.

[01:24:26] Here's what you can plug it into. I think maybe some people think, some companies think, well, you might have some leftover RAM lying around or whatever, but they just can't get the RAM. So they want to still sell something. Or maybe they think, well, he'll take it from the previous laptop and put it in this laptop. Right. Yeah, exactly. Wow.

[01:24:45] And so anyway, given the insane appetite that data centers have for GPUs and things that run AI, seems to me it's going to be a long time before we're able to buy things ourselves that also run AI. Because we're competing with the data centers that are able to purchase all of the next year's production capability. I mean, it's crazy. It's crazy. Okay.

[01:25:15] So I want to plow now into what DigiCert is doing. We got two breaks left. Let's take one now, even though we just did one. And then I will break in the middle of this DigiCert conversation for our final one. Good. Good. Perfect. Perfect. This episode brought to you by OutSystems. Speaking about AI, they're the number one AI development platform. But they solve a lot of these little issues that we've been talking about.

[01:25:43] OutSystems helps businesses bridge the enterprise gap to their agentic future. And I know, you know, I think a lot of businesses want to do this, but they're very nervous, as they should be, about the quality of the code they're going to get, about whether it'll be useful, about whether it's good for just little things, or whether you can do big apps. OutSystems is here. They've been doing this for 20 years. They know how to do it. They know how to do it for business.

[01:26:09] They help your business bridge that gap and get to your agentic future where the constraints of the past give way to unlimited capacity and scale. OutSystems enables your business to build AI agents that actually do work, such as take actions, make decisions, and integrate with data. It's not a chatbot. It's not just answering questions. It's doing work.

[01:26:36] And OutSystems provides the only AI development platform that is unified, agile, and enterprise-proven. Let me explain. First of all, it's unified because you build, run, and govern apps and agents on that single OutSystems platform. It's agile because now you can innovate at the speed of AI, very important, without compromising quality or control. And it is enterprise-proven.

[01:27:01] They've been trusted by enterprises for mission-critical AI applications and durable innovation. OutSystems is the secret weapon behind the world's most successful companies, not just for those little one-shot apps. They are for massive, complex systems that run banks, insurance companies, and government services. OutSystems even helps companies with aging IT environments bridge the gap to the AI future without a rip-and-replace nightmare.

[01:27:30] OutSystems provides the safest, fastest way for an enterprise to go from, we need an AI strategy, to we have a functioning AI application. Stop wondering how AI will change your business and start building the agents that will lead it. Visit OutSystems.com slash twit to see how the world's most innovative enterprises use OutSystems to build, deploy, and manage AI apps and agents quickly and cost-effectively

[01:27:58] without compromising reliability and security. And that's fundamental. That's OutSystems, O-U-T-S-Y-S-T-E-M-S dot com slash T-W-I-T to book a demo. We thank OutSystems so much for supporting Steve and Security Now. And you support us when you go to that address, OutSystems.com slash twit. OutSystems.com slash twit. All right, let's talk. Okay.

[01:28:26] The first I learned of some trouble was from someone posting to GRC's Security Now news group with firsthand experience. Oh. Yep. Peabody, which is his handle, actual name is George.

[01:28:42] He wrote, this morning, Windows Defender told me it had discovered a severe rootkit on my Windows 10 laptop called Win32 Cartagent A!DHA. Now, okay, so consider that. Windows Defender tells you, you've got a rootkit. It's like, what?

[01:29:11] So you don't take that lightly, right? He says, which it has quarantined. He wrote, searching online tells me this is happening on both Windows 10 and 11 computers worldwide. And one hash involved is that of a legitimate DigiCert certificate. This is all above my pay grade, but I'm going to leave things alone for a while and see what happens.

[01:29:40] Turns out he was right. He said, apparently, lots of people are reinstalling Windows because of this. But I think that's super premature at this point. Right again. He said, my guess is this is a gift from Microsoft, which they will admit to shortly. And if you reformatted your drive, they'll apologize for the inconvenience. Dripping with sarcasm there.

[01:30:10] And unfortunately, their apology left a lot to be desired. I was unimpressed. So later that same day, this was this past Sunday, Bleeping Computer's Lawrence Abrams was all over this and was providing answers. Lawrence headlined his news writing,

[01:30:32] Microsoft Defender wrongly flags DigiCert certs as Trojan colon win 32 slash certigent dot a exclamation point DHA. So here's what he wrote.

[01:30:50] He said, Microsoft Defender is detecting legitimate DigiCert root certificates as, and then that Trojan name, resulting in widespread false positive alerts and, in some cases, removing certificates from Windows. Removing their root certificates, by the way.

[01:31:10] According to cybersecurity expert Florian Roth, the issue first appeared after Microsoft added the detections to a Defender signature update on April 30th. Today, administrators worldwide began reporting that DigiCert root certificate entries were flagged as malware and on affected systems removed from the Windows trust store.

[01:31:39] Okay, so hold on. Just to be clear what a disaster this was. As we know, root certificates anchor the chain of trust for everything that chains down to them. With them removed from a system, nothing that chains down to them will be trusted despite having been trusted just moments before. That's the way the system works.
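The effect described here can be sketched in a few lines of Python. This is a toy model only: it follows issuer names and performs no real signature checks, and the certificate names (`Example Code Signing Root`, `Example Issuing CA`, `Example App Publisher`) as well as the function `chain_is_trusted` are hypothetical, not anything from Windows or DigiCert.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cert:
    subject: str
    issuer: str  # equals subject for a self-signed root


def chain_is_trusted(leaf, certs_by_subject, trusted_roots):
    """Follow issuer links upward; trust only if we end at a root in the store."""
    current = leaf
    seen = set()
    while current.subject not in seen:
        seen.add(current.subject)
        if current.subject == current.issuer:
            # Reached a self-signed root: trusted only if the store still holds it.
            return current.subject in trusted_roots
        parent = certs_by_subject.get(current.issuer)
        if parent is None:
            return False  # broken chain, no issuer available
        current = parent
    return False  # issuer loop, never reached a root


# Hypothetical three-certificate chain: root -> issuing CA -> publisher.
root = Cert("Example Code Signing Root", "Example Code Signing Root")
intermediate = Cert("Example Issuing CA", root.subject)
leaf = Cert("Example App Publisher", intermediate.subject)
certs = {c.subject: c for c in (root, intermediate, leaf)}

store = {root.subject}
before = chain_is_trusted(leaf, certs, store)

store.discard(root.subject)  # the root is removed from the trust store
after = chain_is_trusted(leaf, certs, store)

print(before, after)
```

Nothing about the publisher's certificate or the signed app changes between the two checks. Only the contents of the trust store differ, which is why removing a root affects everything anchored beneath it at once.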

[01:32:08] And no one has come up with a better idea for validating signed code. Those two certificates are DigiCert's code signing roots. And for example, all of GRC's, my signed apps, are anchored by one of the two of those that were being deleted.

[01:32:29] So the mistaken removal of those two code signing roots from the Windows trusted root store automatically and instantly renders every app that was ever signed by a DigiCert certificate,

[01:32:47] you know, DigiCert being, as I said before, now the industry's number one certificate authority, renders every one of those invalid and untrusted by Windows. Huge, huge mess. Bleeping Computer continues writing,

[01:33:07] These false positives have led to concern, gee, you think, among Windows users with some thinking their devices were infected and reinstalling the operating system to be safe. Microsoft has reportedly fixed the detections in security intelligence update version 1.449.430.0.

[01:33:32] And the most recent update is now 1.449.431.0. Actually, I think that should be .1. Anyway, reports on Reddit indicate that the fix also restores previously removed certificates on affected systems. Well, that's nice. So, yeah, thank goodness for that. And it's not as if Microsoft had any choice, right, about putting them back.

[01:33:59] It would have been a true disaster if there weren't some immediate means for reverting the specious removal of DigiCert's perfectly valid root certificates. As we'll see in a few moments, even though DigiCert did suffer a breach which caused it to misissue a handful of code signing certificates,

[01:34:24] at no point was the removal of any of their root certificates ever warranted. I mean, that's just nuts. I hope Microsoft will put some safeguards in place to prevent such a thing in the future. Bleeping Computer continues. The new Microsoft Defender updates will automatically install, and Windows users can manually force an update by going to Windows Security,

[01:34:50] Virus and Threat Protection, Protection Updates, and clicking on Check for Updates. After publishing this article, wrote Lawrence, Microsoft confirmed that the false positives were linked to detections for compromised certificates from a recent DigiCert breach. Well, linked, but completely ridiculous to have deleted the roots. Microsoft told Bleeping Computer, here it comes.

[01:35:20] Quote, this is Microsoft speaking. Following reports of compromised certificates, Microsoft Defender immediately added detections for malware in our Defender antivirus software to help keep customers protected. Earlier today, we determined false positive alerts were mistakenly triggered and updated the alert logic.

[01:35:50] Microsoft Defender suppressed and cleaned up the alerts for customer environments. Customers should update to security intelligence version, and then we get that same version number, or later, but do not need to take additional action. In other words, don't reinstall Windows for these alerts.

[01:36:13] We've notified affected organizations and recommended administrators look for more details in the Service Health Dashboard, the SHD, within the M365 Admin Center. Unquote. Huh. Okay. Well, that's an entirely unsatisfying answer from Microsoft.

[01:36:36] But I suppose, given what Microsoft has become, it's the best we're going to get and the best we can expect. Nothing they wrote is untrue, but neither should it satisfy anyone who would have appreciated hearing them say something like, Quote,

[01:36:55] In response to reports of compromised certificates, Microsoft Defender was a bit overzealous and mistakenly removed some related certificates that should have remained. Microsoft Defender was immediately updated to cure that behavior and has replaced any certificates that were mistakenly deleted. You know, is that so difficult to say? It shouldn't be.

[01:37:21] Just wait till you see how thoroughly DigiCert took full responsibility for their part in this drama. Lawrence's reporting continues, writing, The false positives occurred shortly after a disclosed DigiCert security incident that enabled threat actors to obtain valid code signing certificates used to sign malware. The DigiCert incident report explained, Quote,

[01:37:49] A malware incident targeted a customer support team member. Upon detection, the threat vector was contained. Our subsequent investigation found that the threat actor was able to procure initialization codes, which I'll explain in a sec, For a limited number of code signing certificates, a few of which were used to sign malware.

[01:38:13] The identified certificates were revoked within 24 hours of discovery and the revocation date set to their date of issuance. As a precautionary measure, all pending orders within the window of interest were canceled. Additional details will be provided in our full incident report, unquote. So that's a small sample of what good disclosure looks like. Lawrence continues,

[01:38:42] According to DigiCert's incident report, attackers targeted the company's support staff, meaning DigiCert's support staff, in early April by creating support messages containing a malicious zip disguised as a screenshot.

[01:39:01] After multiple blocked attempts, one support analyst's device was eventually compromised, followed by a second system that went undetected for a time due to an endpoint protection sensor gap.

[01:39:17] Using access to the breached support environment, the hacker used a feature in DigiCert's internal support portal that allowed support staff to view customer accounts from the customer's perspective. While limited in scope, this access exposed initialization codes for previously approved but undelivered EV, you know, extended validation, code signing certificates.

[01:39:47] DigiCert explained, Possession of an initialization code combined with an approved order is sufficient to obtain the resulting certificate. Since the threat actor was able to obtain these two pieces of information for a finite set of approved orders, they were able to obtain EV code signing certificates across a set of customer accounts and CAs.
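To make that statement concrete, here is a minimal Python sketch of a pickup check where possession alone is enough. It assumes a hypothetical model, not DigiCert's actual system: the `Order` fields, the `can_collect_certificate` function, and the sample order number and code are all invented for illustration.

```python
import hmac
from dataclasses import dataclass


@dataclass
class Order:
    order_id: str
    approved: bool   # validation finished, certificate approved
    delivered: bool  # certificate already picked up
    init_code: str   # one-time initialization code (hypothetical field)


def can_collect_certificate(order, presented_code):
    """Possession-based check: approved, undelivered order plus the right code.

    Note what is absent: nothing here verifies WHO is presenting the code.
    """
    return (
        order.approved
        and not order.delivered
        and hmac.compare_digest(order.init_code, presented_code)
    )


order = Order("ORDER-0001", approved=True, delivered=False, init_code="INIT-1234")

# The rightful customer and anyone who has seen the code look identical here.
customer_ok = can_collect_certificate(order, "INIT-1234")
attacker_ok = can_collect_certificate(order, "INIT-1234")
wrong_code = can_collect_certificate(order, "INIT-9999")

print(customer_ok, attacker_ok, wrong_code)
```

Under this model, a support view that shows the customer's side of an approved, undelivered order reveals both pieces an attacker needs, which matches the sequence the incident report describes.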

[01:40:15] So, great explanation there. Lawrence says, DigiCert says, It revoked 60, 6-0, code signing certificates, including 27 linked to a Zong Stealer malware campaign. DigiCert explained, 11 were identified in certificate problem reports provided to DigiCert by community members linking the certificates to malware,

[01:40:44] and 16 were identified during our own investigation. This aligns, writes Lawrence, with earlier reports from security researchers who had observed newly issued DigiCert EV certificates used in malware campaigns and reported them to DigiCert, which of course, you know, that's the nightmare scenario, right? I mean, all of the reasons I had to jump through all those hoops in order to get myself

[01:41:12] an EV certificate, actually not even an EV, just a standard validation certificate, is what all of this other mechanism is designed to prevent from happening. Researchers including SquibblyDoo, Malware Hunter Team, and Gonksha reported that certificates issued... I should make you read hacker handles every week. Please go.

[01:41:41] SquibblyDoo says this? Okay, I'm going to go with it. SquibblyDoo, that's right. Issued to well-known companies such as Lenovo, Kingston, Shuttle, now these are the companies to whom these stolen certificates were issued, right? Lenovo, Kingston, Shuttle, Inc., Pallet Microsystems were all used to sign malware. So, question, what do Lenovo, Kingston,

[01:42:11] Shuttle, Inc., and Pallet Microsystems have in common? posted SquibblyDoo on X. EV certificates from these companies were issued and used by a Chinese crime group, Golden Eye Dog, and that's an APT known as Q-27. The malware in this campaign is named Zong Stealer, though analysis indicates it may be more like a remote-access Trojan than an Infostealer.

[01:42:41] The researcher says the malware was distributed through the following attacks. Phishing emails deliver a fake image or screenshot, a first-stage executable that displays a decoy image, retrieval of a second-stage payload from a cloud storage such as AWS, and the use of, wait for it, signed binaries and loaders including components tied to legitimate vendors. So,

[01:43:11] trusted because signed by DigiCert. After DigiCert disclosed the incident, the researcher said the incident report explains how the certificates used in these malware campaigns were obtained because, like, clearly illegitimate. It should be noted that the certificates flagged by Microsoft Defender are root certificates in the Windows Trust Store and do not match

[01:43:40] the revoked DigiCert code-signing certificates used to sign malware. Okay, so that's the great reporting posted Sunday before last by Bleeping Computer's founder Lawrence Abrams. Security industry experts have been citing DigiCert's upfront incident report as a model of how this should be done. Starting 21 days ago,

[01:44:10] DigiCert began issuing a series of incident reports with each succeeding report updating the previous one with the final report being posted seven days ago, exactly one week ago. DigiCert named this event, this final event, Endpoint 2, which is, that's the system where this bad guy was not immediately discovered.